IMPORTANT DISCLAIMER: This is educational content only, not legal, technical, or compliance advice. HIPAA regulations are complex and subject to change. You must consult with qualified HIPAA compliance attorneys, certified privacy professionals, and security experts before implementing any compliance strategy. This article does not create an attorney-client relationship.

Last updated: November 20, 2025 – Regulations and best practices evolve. Always verify current requirements with legal counsel.

Last year, I watched a promising AI startup face serious regulatory challenges. [Note: This is a composite example based on common compliance failures, not a specific real company.]

They’d built a diagnostic algorithm that actually worked—the kind that could genuinely save lives. The tech was solid. The team was smart. But they made one mistake that created major problems.

Someone on the team uploaded a CSV file with patient data into ChatGPT for “quick testing.”

That’s it. HIPAA violation. Serious consequences followed.

When you’re building AI for healthcare, you’re not just writing code. You’re handling data that’s regulated six ways from Sunday. The Health Insurance Portability and Accountability Act (HIPAA) isn’t some bureaucratic checkbox; it’s the difference between having a business and facing multi-million-dollar fines.

I’ve spent over a decade navigating these regulations, and here’s what most developers miss: AI doesn’t get special treatment.


What HIPAA Actually Means for AI Developers

A lot of engineers think HIPAA only applies to hospitals or insurance companies. Wrong.

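If your code touches patient data on behalf of a covered entity (a healthcare provider, health plan, or healthcare clearinghouse), whether by processing it, storing it, or even just accessing it, you’re likely a business associate, and you’re on the hook. HIPAA was enacted in 1996, long before anyone was talking about LLMs or transformer models. But regulators don’t care. Old rules, new technology. Deal with it.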

Why does this matter specifically for AI? Because AI is hungry. It needs data. Lots of it. Whether you’re using RAG to pull context or training models on massive datasets, the moment that data includes identifiable health information, HIPAA kicks in.

⚠️ Always consult with a HIPAA compliance expert to determine your specific obligations based on your use case.

PHI and Data Handling Requirements

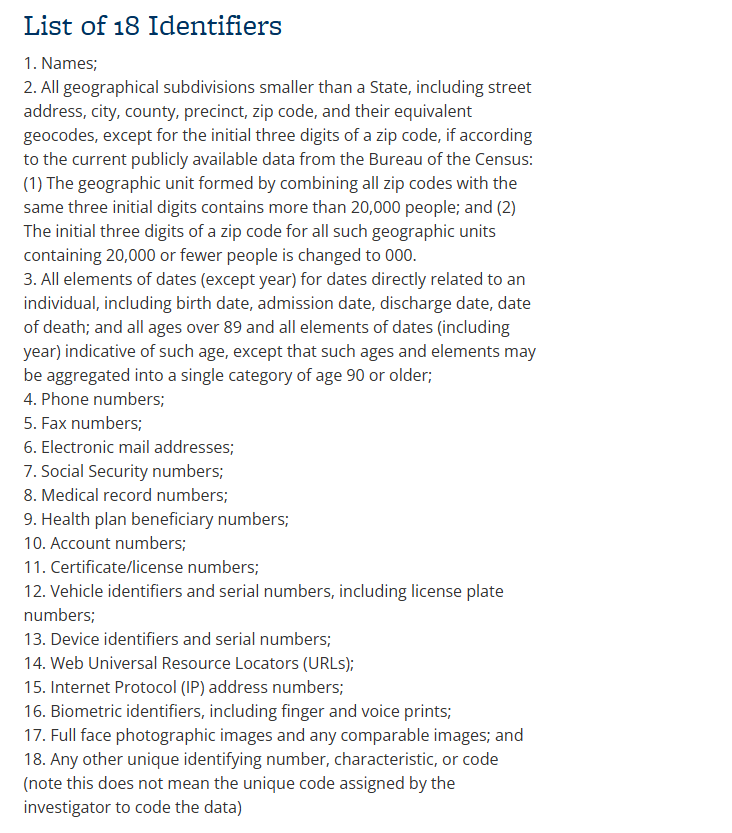

PHI isn’t just medical records. It’s any individually identifiable health information: anything that can identify a patient, combined with their health data. HIPAA’s Safe Harbor de-identification standard lists 18 specific identifiers, and developers constantly trip over these:

- Names (obviously)

- Dates beyond just the year—birth dates, appointment dates, admission dates

- IP addresses (yes, really)

- Biometric data like fingerprints or voice prints

- Photos showing someone’s face

- Any unique identifier, including device IDs
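Several of these identifiers hide in free text and logs, where they are easy to miss. A minimal sketch of a pre-ingestion scan, assuming plain-text input; the pattern names and regexes are hypothetical and intentionally incomplete (regular expressions cannot reliably catch names, addresses, or faces), so treat this as a tripwire, not a de-identification tool:

```python
import re

# Hypothetical, deliberately incomplete patterns for a few identifier types.
# Names, street addresses, and images cannot be caught reliably this way.
PHI_PATTERNS = {
    "ipv4_address": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
    "full_date": re.compile(r"\b\d{4}-\d{2}-\d{2}\b|\b\d{1,2}/\d{1,2}/\d{2,4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "us_phone": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}

def find_identifier_candidates(text: str) -> dict[str, list[str]]:
    """Return every pattern match in the text, grouped by identifier type."""
    hits: dict[str, list[str]] = {}
    for label, pattern in PHI_PATTERNS.items():
        matches = pattern.findall(text)
        if matches:
            hits[label] = matches
    return hits
```

A non-empty result should stop the data from reaching any tool that lacks a BAA; an empty result proves nothing on its own.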

The “Minimum Necessary” Rule

This is the golden rule: Don’t take what you don’t need.

For example, if your AI is predicting sepsis risk, does it need the patient’s name or address? No. So, don’t ingest it. The less data you process, the smaller your “blast radius” if a breach occurs.
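In code, minimum necessary is easiest to enforce as an allowlist at the ingestion boundary. A sketch, assuming records arrive as dictionaries; the field names in `SEPSIS_MODEL_FIELDS` are hypothetical placeholders for whatever your documented analysis approves:

```python
# Hypothetical allowlist for a sepsis-risk model. The real list should come
# from your documented minimum necessary analysis.
SEPSIS_MODEL_FIELDS = frozenset(
    {"heart_rate", "resp_rate", "temp_c", "wbc_count", "lactate", "systolic_bp"}
)

def minimum_necessary(record: dict, allowed: frozenset = SEPSIS_MODEL_FIELDS) -> dict:
    """Keep only allowlisted fields; everything else is never ingested."""
    return {key: value for key, value in record.items() if key in allowed}
```

An allowlist fails closed: a new column added upstream, such as a free-text notes field, is dropped until someone deliberately approves it, whereas a denylist would let it straight through.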

⚠️ Work with legal counsel to document your minimum necessary analysis for your specific application.

Risks of Non-Compliance

Consequences and Penalties of Patient Privacy Breaches

Now, let’s talk money—because HIPAA violations can be extremely costly.

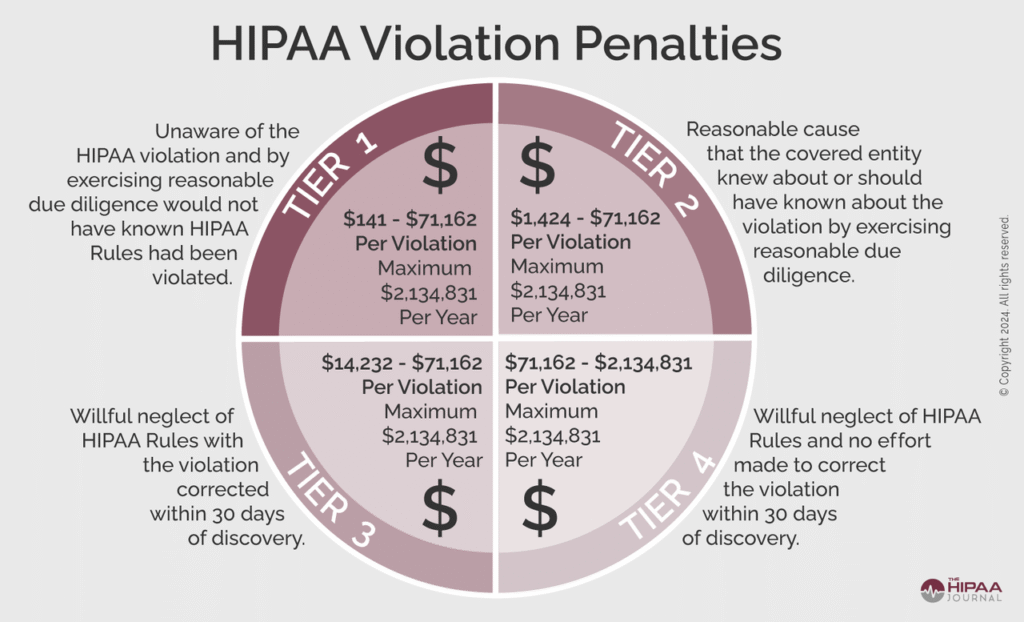

HIPAA penalties (as of 2024) are tiered based on the level of negligence:

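- Tier 1 (Did not know): Minimum $141 per violation

- Tier 2 (Reasonable cause): Minimum $1,424 per violation

- Tier 3 (Willful Neglect – corrected within 30 days): Minimum $14,232 per violation

- Tier 4 (Willful Neglect – uncorrected): Minimum $71,162 per violation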

Note: These penalty amounts are adjusted annually for inflation. Verify current penalty levels with HHS Office for Civil Rights.

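And here’s the kicker: penalties are assessed per violation, and a breach can count as a separate violation for every affected patient record, up to an annual cap for violations of an identical provision (about $2.1 million in 2024 at the highest tier). If your system leaks 50,000 records, you could be looking at over $2 million in penalties, depending on the violation. Real-world HIPAA enforcement examples from the U.S. Department of Health and Human Services (HHS) illustrate just how seriously these breaches are treated.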
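The arithmetic behind that example is a per-violation amount multiplied by the number of violations, clipped at the annual cap. A rough sketch, assuming the Tier 1 minimum of $141 and an annual cap of $2,134,831 (treat that 2024 figure as an assumption and verify it with HHS). It ignores how OCR actually counts violations, mitigating factors, and any lower caps HHS may apply to less culpable tiers:

```python
# Assumed 2024 figures; penalty amounts are inflation-adjusted every year.
TIER_1_MINIMUM_2024 = 141            # Tier 1 minimum per violation
ASSUMED_ANNUAL_CAP_2024 = 2_134_831  # assumption: verify the current cap with HHS

def estimate_civil_penalty(violations: int, per_violation: int, annual_cap: int) -> int:
    """Per-violation amount times violation count, clipped at the annual cap.

    A rough illustration only: it ignores how OCR counts violations,
    mitigating factors, and any lower caps applied to less culpable tiers.
    """
    if min(violations, per_violation, annual_cap) < 0:
        raise ValueError("inputs must be non-negative")
    return min(violations * per_violation, annual_cap)

# 50,000 records at the Tier 1 minimum: 50,000 * $141 = $7,050,000 before the cap,
# so estimate_civil_penalty(50_000, 141, 2_134_831) returns the cap, 2_134_831.
```

The cap applies per identical provision per year, so a breach that implicates several provisions can exceed it in total.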

It’s not just about money, though. The Department of Justice (DOJ) can pursue criminal charges for intentional misuse of PHI in specific circumstances, including:

- Knowingly obtaining or disclosing PHI without authorization (up to $50,000 in fines and 1 year imprisonment)

- Obtaining PHI under false pretenses (up to $100,000 and 5 years imprisonment)

- Using or disclosing PHI with intent to sell, transfer, or use it for commercial advantage, personal gain, or malicious harm (up to $250,000 and 10 years imprisonment)

Important: Criminal prosecution requires proof that the person acted knowingly and is typically reserved for the most egregious violations. Most compliance issues are handled civilly. Consult with legal counsel to understand the specific circumstances that could trigger criminal investigation.

And, of course, your reputation will take a massive hit. No healthcare institution will partner with a vendor known for data leaks.

Designing AI Applications for HIPAA Compliance

Data De-Identification and Encryption

This is your best defense. If the data isn’t PHI, HIPAA doesn’t apply.

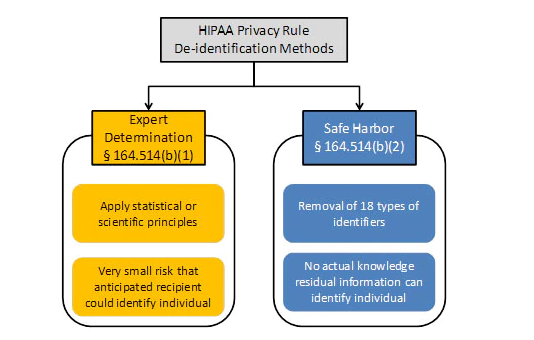

There are two primary paths for de-identification:

- Safe Harbor Method: Remove all 18 identifiers, and have no actual knowledge that the remaining information could identify an individual. This is the safest option for training data.

- Expert Determination: This method requires hiring a qualified statistician with expertise in re-identification risk assessment to formally determine if the risk of re-identification is sufficiently low. This is a complex, time-consuming, and potentially expensive process that requires extensive documentation and ongoing monitoring. It is not a simple shortcut. This option may be necessary when certain data points (like dates or zip codes) are clinically essential.
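A partial sketch of a few Safe Harbor transformations on structured records: dropping direct identifiers, reducing dates to the year, truncating ZIP codes to three digits, and aggregating ages over 89. The field names are hypothetical, the low-population ZIP3 set must come from current Census data, and the code deliberately does not handle free text, photos, or the birth years of patients over 89 (which Safe Harbor also requires you to aggregate):

```python
from datetime import date

# Hypothetical column names for direct identifiers. A real pipeline must cover
# all 18 Safe Harbor categories, including identifiers hidden in free text.
DIRECT_IDENTIFIER_FIELDS = frozenset(
    {"name", "email", "phone", "mrn", "ssn", "ip_address", "device_id"}
)

def safe_harbor_transform(record: dict, low_population_zip3: set[str]) -> dict:
    """Apply a subset of Safe Harbor rules to one structured record.

    NOTE: not handled here: birth years of patients over 89, free text, images.
    """
    out = {}
    for key, value in record.items():
        if key in DIRECT_IDENTIFIER_FIELDS:
            continue  # drop direct identifiers entirely
        if isinstance(value, date):
            out[key] = value.year  # keep only the year (datetime is a date subclass)
        elif key == "zip":
            zip3 = str(value)[:3]
            # ZIP3 areas with 20,000 or fewer residents must become "000";
            # the caller supplies that set from current Census data.
            out[key] = "000" if zip3 in low_population_zip3 else zip3
        elif key == "age" and isinstance(value, int) and value > 89:
            out[key] = "90+"  # aggregate all ages over 89 into one category
        else:
            out[key] = value
    return out
```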

For detailed methods, see the HHS guidance on de-identification of PHI. Always work with qualified experts and legal counsel when implementing de-identification strategies.

Encryption: A Non-Negotiable Requirement

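Under the HIPAA Security Rule, encryption is technically an “addressable” implementation specification rather than a strictly required one, but in practice you should treat it as mandatory for data at rest and in transit: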

- At rest: Everything—databases, model weights that contain PHI, backups. AES-256 is currently standard. Check out NIST SP 800-111 for storage encryption guidelines. Note: Encryption standards evolve. Verify current NIST recommendations.

- In transit: TLS 1.2 or higher (TLS 1.3 recommended as of 2025) for all API calls, microservices, everything. NIST SP 800-52r2 covers TLS best practices. Older versions like TLS 1.0 and 1.1 are deprecated and should not be used.
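On the client side, you can enforce the TLS floor in code rather than trusting defaults. A minimal sketch using Python’s standard `ssl` module; the function name is hypothetical, and recent Python versions may already default to a TLS 1.2 minimum, so setting it explicitly mainly documents the requirement:

```python
import ssl

def make_phi_client_context() -> ssl.SSLContext:
    """Client-side TLS context for calls that may carry PHI."""
    # Certificate verification and hostname checking are on by default here.
    ctx = ssl.create_default_context()
    # Refuse TLS 1.0/1.1 outright; raise to TLSv1_3 if all endpoints support it.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx
```

Pass the context to your HTTP client, for example `urllib.request.urlopen(url, context=ctx)`. Server-side and service-mesh settings still need the same floor configured separately.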

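Encryption means that even if someone steals your hardware, they can’t read the data, as long as the keys aren’t stolen along with it. PHI encrypted in line with HHS guidance also counts as “secured,” so losing an encrypted device generally doesn’t trigger breach notification.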

⚠️ Consult with cybersecurity professionals to ensure your encryption implementation meets current standards and your specific risk profile.

Working with Third-Party AI Services

Business Associate Agreements (BAAs)

One of the most common mistakes I see is developers using a third-party API (e.g., OpenAI, Anthropic, Google) without checking the terms and conditions.

Standard consumer terms usually allow the vendor to train on your data. If you’re sending patient data to a third-party service without a Business Associate Agreement (BAA), you’ve just violated HIPAA.

BAAs are essential: they contractually obligate the vendor to safeguard the PHI you send it and to report breaches to you. Major providers like OpenAI, AWS, Azure, and Google Cloud offer BAAs for enterprise clients (as of late 2024/early 2025).

Important: BAA availability, terms, and covered services change frequently. Always verify current offerings directly with vendors:

- OpenAI, Anthropic, and other AI providers: Check their enterprise/healthcare offerings directly

No BAA? No PHI. Period.
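One way to turn “No BAA? No PHI.” into an engineering control rather than a policy memo is a deny-by-default check in front of outbound calls. A sketch, assuming a hypothetical registry of BAA-covered hostnames maintained with your legal team; the hostname and names below are placeholders:

```python
from urllib.parse import urlparse

class MissingBAAError(RuntimeError):
    """Raised when PHI would be sent to a service with no BAA on file."""

# Hypothetical registry: hosts covered by a signed BAA and configured per it.
BAA_COVERED_HOSTS = frozenset({"phi-api.vendor-with-baa.example"})

def check_phi_destination(url: str, contains_phi: bool) -> None:
    """Block outbound requests carrying PHI unless the host is BAA-covered."""
    host = urlparse(url).hostname
    if contains_phi and host not in BAA_COVERED_HOSTS:
        raise MissingBAAError(f"No BAA on file for {host!r}; refusing to send PHI")
```

The hard part is setting `contains_phi` correctly; when in doubt, treat the payload as PHI and let the check fail closed.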

⚠️ Have your legal team review all BAAs before signing. Not all services covered under a BAA are automatically HIPAA-compliant—you must also configure and use them correctly.

Best Practices and Checklist for HIPAA Compliance

Ongoing Monitoring and Staff Training

Phishing and social engineering remain among the most common ways attackers infiltrate systems. HIPAA requires workforce security awareness training; run it at least annually and tailor it specifically for AI developers:

- Ensure staff understand why they can’t input patient data into AI services like ChatGPT without proper BAAs and safeguards

- Ensure they don’t download patient data to personal devices

- Update training materials annually to reflect new threats and regulatory guidance

For broader AI-specific risk management (complementary to HIPAA), consider the NIST AI Risk Management Framework.

⚠️ Work with compliance professionals to develop training programs appropriate for your organization.

Monitor in Real-Time

Set up anomaly detection. If someone’s downloading massive amounts of patient data at 3 AM on a Sunday, you need to know.
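A simple rule-based stand-in for anomaly detection (not a statistical model) might flag very large pulls at any time, plus smaller pulls on weekends or outside business hours. The thresholds and the `AccessEvent` shape are hypothetical; tune them against your own baseline traffic:

```python
from dataclasses import dataclass
from datetime import datetime

@dataclass
class AccessEvent:
    """One audit-log entry: who pulled how many patient records, and when."""
    user: str
    timestamp: datetime
    records_accessed: int

# Hypothetical thresholds; tune them against your own baseline traffic.
BULK_THRESHOLD = 1_000         # flag pulls this large at any time
OFF_HOURS_THRESHOLD = 100      # lower bar outside business hours
BUSINESS_HOURS = range(7, 19)  # 07:00 through 18:59, local time

def is_off_hours(ts: datetime) -> bool:
    """Weekends (Saturday=5, Sunday=6) or outside business hours."""
    return ts.weekday() >= 5 or ts.hour not in BUSINESS_HOURS

def flag_suspicious(events: list[AccessEvent]) -> list[AccessEvent]:
    """Return events that look like bulk or off-hours data pulls."""
    flagged = []
    for event in events:
        if event.records_accessed >= BULK_THRESHOLD:
            flagged.append(event)
        elif is_off_hours(event.timestamp) and event.records_accessed >= OFF_HOURS_THRESHOLD:
            flagged.append(event)
    return flagged
```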

Regular Audits and Risk Testing

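Conduct penetration testing and adversarial testing of your AI models to check whether PHI can be extracted, for example through model inversion or training-data extraction attacks. Regularly assess and document your data flows and risks; the HIPAA Security Rule requires a documented risk analysis.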
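Alongside a formal pen test, a cheap regression check is a verbatim-extraction probe: prompt the model and scan its outputs for known sensitive values, such as canary strings planted in the training data. This is a basic sketch, not a model inversion or membership-inference attack, and a clean result does not prove the model is safe; `generate` is a hypothetical stand-in for whatever function calls your model:

```python
from typing import Callable, Iterable

def probe_for_leakage(
    generate: Callable[[str], str],
    prompts: Iterable[str],
    sensitive_values: list[str],
) -> list[tuple[str, str]]:
    """Return (prompt, value) pairs where the model output contains a known value.

    Matching is a case-insensitive substring check, so paraphrased or
    partially reconstructed PHI will not be caught.
    """
    leaks = []
    for prompt in prompts:
        output = generate(prompt).lower()
        for value in sensitive_values:
            if value.lower() in output:
                leaks.append((prompt, value))
    return leaks
```

Run it in CI against every new model version so a regression shows up before deployment rather than after.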

⚠️ Engage qualified third-party security auditors to validate your compliance posture.

Final Thoughts

Building HIPAA-compliant AI is hard. But it’s also a competitive advantage. Healthcare organizations want to work with vendors they can trust.

Don’t treat HIPAA like a checklist you fill out once and forget. Build compliance into your product from day one. Keep your data handling clean. And for the love of God, get that BAA before you send a single API request containing PHI.

Your future self will thank you.

FINAL DISCLAIMER: This article does not constitute legal, technical, compliance, financial, or professional advice of any kind. HIPAA regulations are complex, frequently updated, and subject to interpretation. Penalties, technical standards, and best practices change over time. The information provided here may become outdated. You must work with qualified HIPAA compliance attorneys, certified privacy professionals (such as Certified in Healthcare Privacy and Security – CHPS), and information security experts to develop and maintain a compliant program appropriate for your specific circumstances. The author and publisher assume no liability for actions taken based on this information.