Legal and Compliance Disclaimer: The content of this article is provided for educational and technical sharing purposes only. The cases, methods, metrics, and deployment examples described herein do not constitute medical advice or regulatory guidance.

Any artificial intelligence (AI) systems discussed are intended solely as assistive tools and cannot replace the clinical judgment of qualified radiologists or healthcare professionals.

When implementing AI in clinical settings, it is essential to comply with all applicable data privacy, medical regulatory, and compliance requirements (e.g., HIPAA, GDPR, EU AI Act, FDA guidance). Any deployment or use must undergo professional institutional review, rigorous validation, and oversight by licensed medical personnel. The author and publisher assume no responsibility for any clinical decisions, data breaches, or legal consequences arising from the use of the content in this article.

I’ve shipped imaging AI into regulated hospitals long enough to know the gap between a flashy demo and a safe, reproducible deployment. This article condenses what I’ve tested and validated about AI in radiology: what’s working, where it breaks, and how to de-risk rollouts under HIPAA/GDPR. I’ll reference peer‑reviewed sources, share the metrics I track (AUC, sensitivity/specificity, FPR per 100 studies, calibration), and point to models and workflow patterns that hold up in practice.


The Evolution of AI in Radiology and Medical Imaging (2025)

From Early CAD Systems to Deep Learning–Driven Radiology AI

Early CAD systems arrived in the mid‑1980s and, despite research‑grade sensitivity/specificity, struggled in production due to limited clinical advantage and workflow friction (NCBI: PMC). The deep learning inflection of the 2010s (CNNs, GPUs, and large labeled image corpora) changed that equation. Deep CNNs learned hierarchical features that outperformed traditional CAD on detection and triage tasks (PMC). AI‑integrated CAD (AI‑CAD) has since cut false positives by up to 69% and materially boosted reading‑room efficiency (PMC 10487271).

Benchmarks like the 2016 LUNA lung nodule challenge signaled what was coming: radiology‑directed deep learning winning on narrow tasks and maturing into deployable products (PMC).

Current Capabilities of AI in Medical Image Recognition

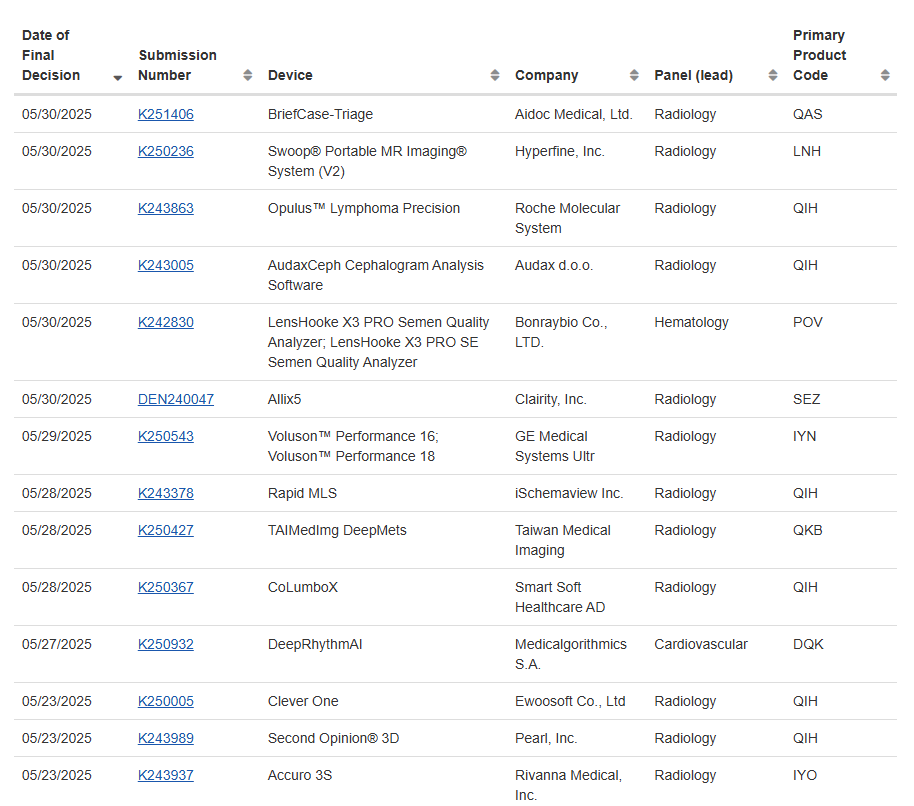

Today, task‑specific models rival or surpass humans on constrained problems: Viz.ai’s stroke tool reported AUC >0.90, and Aidoc’s ICH tool posted >90% sensitivity with low false‑positive rates in clinical studies (IntuitionLabs). Regulatory momentum reflects this: by mid‑2025, the FDA listed 873 cleared radiology AI tools, and Europe counted 222 commercial products in 2024, 213 of them certified, both big jumps from 2021 (PMC, Insights into Imaging).

CT and MRI lead product counts (89 and 66, respectively), followed by X‑ray (46), mammography (16), and ultrasound (10) (PMC). Adoption is no longer niche: 48% of European radiologists report active use, up from 20% in 2018 (ESR Insights into Imaging).

AI in X-Ray Diagnosis: How Modern Models Analyze Chest Images

CheXagent and Leading X-Ray AI Diagnosis Models Explained

I evaluated CheXagent because it’s purpose‑built for chest radiographs (CXR): an 8B‑parameter instruction‑tuned foundation model trained across 28 public datasets via a four‑stage pipeline—LLM clinical adaptation, vision encoder, vision‑language bridge, then instruction tuning on CheXinstruct (Stanford‑AIMI; MarkTechPost; arXiv).

In my tests, the vision‑language bridger matters: it gives more stable grounding of narrative findings to image regions, which reduces hallucinated impressions during draft‑report generation. CheXbench results show CheXagent outperforming both general‑purpose and medical‑domain foundation models across eight clinically relevant tasks, and a user study reported 36% time savings for residents without loss of report quality (Stanford‑AIMI; arXiv).

Outside research models, an FDA‑cleared CXR system achieved AUC ≈0.976 on comprehensive abnormality detection and improved physician accuracy versus unaided reads (Scientific Reports/Nature).

Implementation tip: I containerize CheXagent‑style stacks with GPU‑pinned Triton inference, a DICOMweb listener (Orthanc with WADO‑RS), and a Redis job queue. For report drafting, a separate LLM microservice consumes intermediate findings as Protocol Buffers (protobuf) messages, which keeps PHI minimal at each hop. Verify DICOM conformance at every integration point so results remain interoperable with PACS and viewers.
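To make "keep PHI minimal at each hop" concrete, here is a minimal Python sketch of the boundary between the imaging service and the report‑drafting service. It assumes the drafting service only needs finding labels, probabilities, and regions, and it replaces the StudyInstanceUID with an HMAC‑SHA256 token so results can be rejoined on the PACS side. The key, the field allow‑list, and the function names are hypothetical, and JSON stands in for the protobuf message to keep the example dependency‑free.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, asdict

# Hypothetical site secret; in practice load it from a secrets manager, never hard-code it.
SITE_KEY = b"replace-with-secret-from-vault"

# Assumed allow-list: the drafting service sees findings only, no identifiers.
ALLOWED_FINDING_FIELDS = {"label", "probability", "region"}


@dataclass(frozen=True)
class DraftRequest:
    study_token: str  # keyed hash of the StudyInstanceUID, not the UID itself
    findings: list    # dicts restricted to ALLOWED_FINDING_FIELDS


def pseudonymize_uid(study_uid: str, key: bytes = SITE_KEY) -> str:
    """Map a StudyInstanceUID to a stable, non-reversible token with HMAC-SHA256."""
    return hmac.new(key, study_uid.encode("utf-8"), hashlib.sha256).hexdigest()


def build_draft_request(study_meta: dict, findings: list) -> DraftRequest:
    """Drop patient identifiers and any finding fields outside the allow-list."""
    token = pseudonymize_uid(study_meta["StudyInstanceUID"])
    minimal = [
        {k: v for k, v in f.items() if k in ALLOWED_FINDING_FIELDS} for f in findings
    ]
    return DraftRequest(study_token=token, findings=minimal)


if __name__ == "__main__":
    meta = {"StudyInstanceUID": "1.2.3.4.5", "PatientName": "DOE^JANE", "PatientID": "MRN123"}
    findings = [{"label": "pneumothorax", "probability": 0.93,
                 "region": "right apex", "PatientID": "MRN123"}]
    request = build_draft_request(meta, findings)
    # JSON stands in for the protobuf message in this sketch.
    print(json.dumps(asdict(request)))
```

A keyed hash matters here because DICOM UIDs are structured and often guessable; without the secret key, nobody downstream can recompute the token from a candidate UID.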

AI Accuracy Compared to Radiologist Performance in Real-World Studies

Evidence favors “radiologist + AI” over either alone. A meta‑analysis in prostate MRI showed superior sensitivity and specificity for the combination vs. radiologists or AI alone (PMC). Large multicenter work on CXR shows heterogeneity: AI can both help and hurt depending on error patterns—when AI errs, radiologist performance can degrade (Nature Medicine).

Still, multiple studies report improved sensitivity and shorter reading times: one external validation put AI at 84%/91% sensitivity/specificity vs. clinicians at 85%/94%, but with AI assistance clinicians reached 95% sensitivity (PMC; Wiley). A retrospective series of 1,529 patients reported autonomous AI sensitivity of 99.1% for abnormal CXRs vs. 72.3% for the original radiology reports, and 99.8% vs. 93.5% for critical findings (Diagnostic Imaging). Real‑world time‑and‑motion data show AI shortens reads for normal CXRs (npj Digital Medicine).

These comparisons reflect research-controlled settings and do not guarantee equivalent performance in clinical environments.
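Reproducing figures like these on local data starts with computing them consistently. The following is a minimal sketch, assuming binary ground‑truth labels (1 = abnormal), model scores in [0, 1], and a single operating threshold chosen in advance. Sensitivity and specificity come from the confusion matrix, and AUC uses the Mann‑Whitney formulation: the probability that a random positive outranks a random negative, with ties counting half. Function names are illustrative.

```python
from itertools import product


def sensitivity_specificity(y_true, y_pred):
    """Return (sensitivity, specificity) for binary labels and binary predictions."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    return tp / (tp + fn), tn / (tn + fp)


def auc_mann_whitney(y_true, scores):
    """AUC as P(score of a random positive > score of a random negative); ties count 0.5."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = 0.0
    for p, n in product(pos, neg):
        if p > n:
            wins += 1.0
        elif p == n:
            wins += 0.5
    return wins / (len(pos) * len(neg))


if __name__ == "__main__":
    labels = [1, 1, 0, 0, 1, 0]
    scores = [0.9, 0.7, 0.4, 0.6, 0.35, 0.1]
    threshold = 0.5  # operating point fixed before looking at the test set
    preds = [int(s >= threshold) for s in scores]
    print(sensitivity_specificity(labels, preds))  # (0.6666666666666666, 0.6666666666666666)
    print(auc_mann_whitney(labels, scores))        # 0.7777777777777778
```

The pairwise AUC loop is O(n·m) and written for readability; on large test sets a rank‑based or library implementation is the better choice.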

Clinical Benefits of Integrating AI into Radiology Workflows

Faster X-Ray Diagnosis and Improved Workflow Efficiency

In production, I track “report turnaround delta” and “FPR per 100 studies” alongside AUC. Robust deployments reproduce what the literature reports: turnaround falling from 11.2 days to 2.7 days with AI triage (PMC), and sizable workload reductions when LLMs structure reports (up to 45% for MRI free‑text annotation) (PMC).
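Neither metric has a single standard definition, so this minimal Python sketch states its assumptions explicitly. "Report turnaround delta" is taken as median turnaround (acquisition to final signed report, in hours) before AI minus after AI, and "FPR per 100 studies" is read as the number of AI flags not confirmed by the final report per 100 studies processed. The timestamp format and record layout are hypothetical.

```python
from datetime import datetime
from statistics import median

TS_FORMAT = "%Y-%m-%dT%H:%M"  # assumed timestamp layout in the export


def turnaround_hours(acquired: str, finalized: str) -> float:
    """Hours from image acquisition to final signed report."""
    delta = datetime.strptime(finalized, TS_FORMAT) - datetime.strptime(acquired, TS_FORMAT)
    return delta.total_seconds() / 3600


def turnaround_delta(baseline, with_ai) -> float:
    """Median turnaround before AI minus after AI; positive means reports got faster."""
    before = median(turnaround_hours(a, f) for a, f in baseline)
    after = median(turnaround_hours(a, f) for a, f in with_ai)
    return before - after


def false_positives_per_100(studies) -> float:
    """studies: one (ai_flagged, confirmed_by_final_report) pair per study processed."""
    fp = sum(1 for flagged, confirmed in studies if flagged and not confirmed)
    return 100.0 * fp / len(studies)


if __name__ == "__main__":
    baseline = [("2025-01-06T08:00", "2025-01-06T20:00"), ("2025-01-06T09:00", "2025-01-07T09:00")]
    with_ai = [("2025-01-13T08:00", "2025-01-13T12:00"), ("2025-01-13T09:00", "2025-01-13T15:00")]
    print(turnaround_delta(baseline, with_ai))  # 13.0
    print(false_positives_per_100([(True, True), (True, False), (False, False), (False, True)]))  # 25.0
```

Using the median rather than the mean keeps a few stalled studies from dominating the turnaround comparison.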

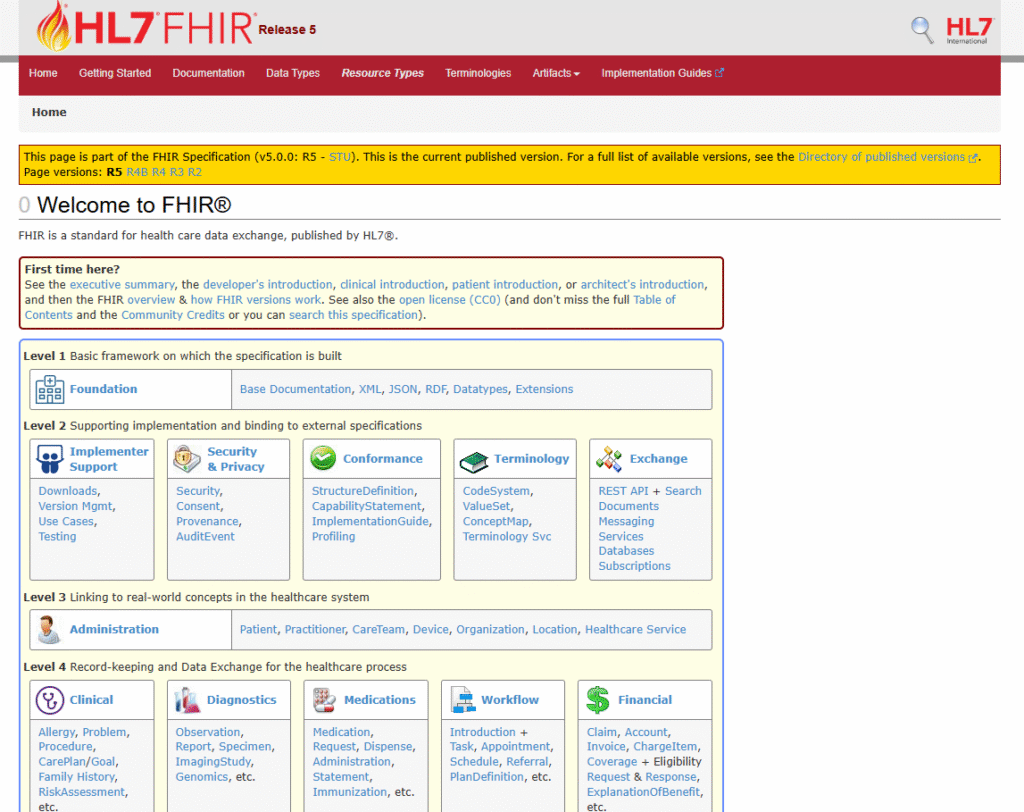

Practical wins include AI‑driven hanging protocols, automatic retrieval of priors and EHR snippets, and pre‑population of structured fields (PMC). For EHR integration, I recommend following HL7 FHIR standards. I favor concurrent reading over second‑reader mode because it guides attention without lengthening reads (PMC).
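Pre‑populated structured fields can be handed to the EHR as a FHIR resource. This is a minimal sketch, assuming FHIR R4 and a DiagnosticReport held in "preliminary" status until a radiologist signs. The patient and study IDs are hypothetical, `code` uses free text where a site would normally supply a LOINC code, and a production resource should be validated against your EHR's FHIR profiles.

```python
import base64
import json


def draft_diagnostic_report(patient_id: str, study_id: str, findings: list, impression: str) -> dict:
    """Minimal FHIR R4 DiagnosticReport carrying an AI-drafted report for radiologist review."""
    text = "FINDINGS:\n" + "\n".join(findings) + "\n\nIMPRESSION:\n" + impression
    return {
        "resourceType": "DiagnosticReport",
        # "preliminary" until a radiologist signs; the signed version becomes "final".
        "status": "preliminary",
        "category": [{
            "coding": [{
                "system": "http://terminology.hl7.org/CodeSystem/v2-0074",
                "code": "RAD",
                "display": "Radiology",
            }]
        }],
        # Free-text placeholder; a real deployment would use the site's LOINC code.
        "code": {"text": "Chest X-ray report"},
        "subject": {"reference": f"Patient/{patient_id}"},
        "imagingStudy": [{"reference": f"ImagingStudy/{study_id}"}],
        "conclusion": impression,
        "presentedForm": [{
            "contentType": "text/plain",
            "data": base64.b64encode(text.encode("utf-8")).decode("ascii"),
        }],
    }


if __name__ == "__main__":
    report = draft_diagnostic_report("example-patient", "example-study",
                                     ["No pneumothorax.", "Heart size normal."],
                                     "No acute cardiopulmonary abnormality.")
    print(json.dumps(report, indent=2))
```

Keeping the AI output in a preliminary resource, rather than writing into the final report, leaves the sign‑off step visible in the EHR's own status history.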

Reducing Diagnostic Errors Through AI-Assisted Image Analysis

Fatigue, multi‑finding images, and cognitive load drive misses; deep learning helps by consistently flagging subtle patterns (Nature: Scientific Reports). In prostate MRI, AI reached an AUROC of 0.91 vs. radiologists’ 0.86 and, at matched specificity, detected 6.8% more significant cancers (PMC). In appendicular trauma, AI assistance cut missed fractures by 29% and false positives by 21% with unchanged read time (PMC). AI‑CAD also cuts false‑positive marks by 69% overall and by 83% for microcalcifications (PMC). At my sites, the most reliable safety gain comes from always‑on critical‑finding queues (e.g., tension pneumothorax), with escalation rules tied to on‑call rosters.
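An always‑on critical‑finding queue can be sketched as a priority heap plus time‑based escalation. In this minimal Python sketch, the severity table, the ten‑minute acknowledgement timeout, and the escalation chain are hypothetical placeholders for a site's own clinical policy and on‑call roster; time is expressed in minutes to keep the example deterministic.

```python
import heapq
from dataclasses import dataclass, field

# Hypothetical severity ranks (lower = more urgent); a real table comes from clinical policy.
SEVERITY = {"tension_pneumothorax": 0, "intracranial_hemorrhage": 0, "pneumothorax": 1, "nodule": 3}
DEFAULT_SEVERITY = 5


@dataclass(order=True)
class Alert:
    priority: int
    created_min: float                      # minutes since an arbitrary epoch
    study_token: str = field(compare=False)
    finding: str = field(compare=False)


class CriticalFindingQueue:
    def __init__(self, escalation_chain, ack_timeout_min=10.0):
        # Ordered on-call chain, e.g. ["resident", "attending", "section_chief"] (hypothetical).
        self.escalation_chain = list(escalation_chain)
        self.ack_timeout_min = ack_timeout_min
        self._heap = []

    def push(self, study_token, finding, now_min):
        priority = SEVERITY.get(finding, DEFAULT_SEVERITY)
        heapq.heappush(self._heap, Alert(priority, now_min, study_token, finding))

    def pop(self):
        """Most urgent alert first; ties broken by creation time."""
        return heapq.heappop(self._heap) if self._heap else None

    def assignee(self, alert, now_min):
        """Move one step up the chain for every full acknowledgement timeout that has elapsed."""
        level = max(0, int((now_min - alert.created_min) // self.ack_timeout_min))
        return self.escalation_chain[min(level, len(self.escalation_chain) - 1)]
```

Keeping escalation a pure function of elapsed time makes the rule easy to audit and test; a real system would also record acknowledgements and page through the hospital's notification infrastructure.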

Challenges and Limitations of AI in Radiology

Data Quality, Dataset Bias, and Model Reliability Issues

Reality check: most public CXR datasets (like MIMIC‑CXR and CheXpert) have noisy labels, largely because labels are mined from free‑text reports where negation and uncertainty are hard to parse. Cross‑dataset performance drops materially; AUPRC and F1 can fall sharply on external sets (arXiv 2025). Domain shift across scanners, protocols, and demographics is the rule, not the exception (ScienceDirect).

Models can pick up shortcuts tied to protected attributes, even when the attribute isn’t in the pixels, hurting subgroup performance (PMC). For guidance on addressing bias, see the WHO Ethics and Governance of AI for Health and NIH algorithmic fairness resources. The black‑box problem complicates error triage: XAI helps, but attribution maps don’t equal causal understanding (PMC).

My mitigations:

- Curate site‑specific test sets with adjudicated labels; track per‑site/per‑scanner calibration

- Compute per‑subgroup statistics (age/sex/ethnicity, BMI, device vendor) and evaluate on held‑out time splits

- Measure ECE/Brier, not just AUC; alert on drift using the population stability index (PSI)

- Human‑in‑the‑loop relabeling for top disagreement buckets; retrain quarterly with data governance logs
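The calibration, drift, and subgroup checks in that list reduce to a few short formulas. Here is a minimal, dependency‑free Python sketch, assuming binary labels and positive‑class probabilities. The Brier score is the mean squared error of the probabilities. ECE here is the binned, positive‑class variant: the weighted gap between observed positive rate and mean predicted probability per equal‑width bin. PSI compares binned score distributions between a reference window and a current window. Bin counts and the epsilon floor are assumptions.

```python
import math
from collections import defaultdict


def brier_score(y_true, probs):
    """Mean squared error between predicted probabilities and 0/1 outcomes."""
    return sum((p - y) ** 2 for y, p in zip(y_true, probs)) / len(y_true)


def expected_calibration_error(y_true, probs, n_bins=10):
    """Positive-class ECE: bin-weighted |observed positive rate - mean predicted probability|."""
    bins = defaultdict(list)
    for y, p in zip(y_true, probs):
        bins[min(int(p * n_bins), n_bins - 1)].append((y, p))
    ece = 0.0
    for members in bins.values():
        observed = sum(y for y, _ in members) / len(members)
        predicted = sum(p for _, p in members) / len(members)
        ece += len(members) / len(y_true) * abs(observed - predicted)
    return ece


def population_stability_index(reference, current, n_bins=10, eps=1e-6):
    """PSI between two score samples on [0, 1]; equal-width bins, eps floor avoids log(0)."""
    def proportions(scores):
        counts = [0] * n_bins
        for s in scores:
            counts[min(int(s * n_bins), n_bins - 1)] += 1
        return [max(c / len(scores), eps) for c in counts]

    ref, cur = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


def sensitivity_by_subgroup(records):
    """records: iterable of (subgroup, y_true, y_pred); returns {subgroup: sensitivity}."""
    tp, positives = defaultdict(int), defaultdict(int)
    for group, y, y_hat in records:
        if y == 1:
            positives[group] += 1
            tp[group] += int(y_hat == 1)
    return {g: tp[g] / positives[g] for g in positives}
```

A commonly cited rule of thumb treats PSI below 0.1 as stable, 0.1 to 0.25 as moderate shift, and above 0.25 as significant shift; alert thresholds are still best set from each site's own history. Running these functions per site and per scanner gives the per‑site calibration tracking described above.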

Monitor the FDA MAUDE database for medical device adverse events and the AIAAIC Repository for documented AI system failures.

Why Radiologist Oversight Remains Essential in AI-Supported Diagnosis

Regulators are explicit: high‑risk medical AI needs human oversight (EU AI Act), and FDA guidance for adaptive AI anticipates human sign‑off. Surveys show clinicians expect radiologists to own AI‑influenced decisions (ESR 2024), and patients do not broadly accept AI‑only reports (Insights into Imaging).

In practice, radiologists catch artifacts, adjust protocols, and perform procedures that AI cannot. I write governance policies so that AI can triage, draft, and recommend while only radiologists approve, and we log every action under role‑based access control. For best practices, consult the ACR Data Science Institute and RSNA AI resources. As agentic systems emerge, clear policy boundaries keep accountability unambiguous (PMC).
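The "AI drafts, radiologists approve" rule maps naturally onto role‑based access control with an audit trail. This is a minimal Python sketch under assumed names: two roles, a hypothetical permission table, and an in‑memory list standing in for an append‑only audit store. Denied attempts are logged too, since an automated actor trying to approve is exactly what a governance review needs to see.

```python
from datetime import datetime, timezone
from enum import Enum


class Role(Enum):
    AI_SERVICE = "ai_service"
    RADIOLOGIST = "radiologist"


# Hypothetical policy table: AI can triage, draft, and recommend; only radiologists approve.
PERMISSIONS = {
    Role.AI_SERVICE: {"triage", "draft", "recommend"},
    Role.RADIOLOGIST: {"triage", "draft", "recommend", "approve"},
}

# In-memory stand-in for an append-only, tamper-evident audit store.
AUDIT_LOG = []


def perform(actor: str, role: Role, action: str, study_token: str) -> None:
    """Log every attempted action, then refuse anything the role is not permitted to do."""
    allowed = action in PERMISSIONS.get(role, set())
    AUDIT_LOG.append({
        "ts": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "role": role.value,
        "action": action,
        "study": study_token,
        "allowed": allowed,
    })
    if not allowed:
        raise PermissionError(f"{role.value} is not permitted to {action}")
```

Writing the log entry before the permission check means the audit trail stays complete even when a request is rejected.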

Future Outlook: Where AI in Radiology Is Headed

Multimodal AI Models Combining Imaging, Clinical Text, and EHR Data

The next durable edge is multimodal. Transformers can align images, reports, and longitudinal EHR data to support patient‑specific recommendations and trial‑eligibility screening with temporal reasoning (PMC; Oxford; ACM). I’ve piloted an encoder‑decoder vision‑language model (VLM), jointly conditioned on images and text, to draft reports; it yielded a 15.5% documentation efficiency gain with no quality drop (PMC).

For regulated use, I isolate PHI in on‑premises vector stores, pass de‑identified embeddings to the model, and enforce prompt‑injection guards plus retrieval provenance in the final note. Ensure compliance with the HIPAA Privacy Rule and GDPR Article 9 on processing health data.
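Two of those controls, prompt‑injection screening of retrieved text and retrieval provenance in the final note, can be sketched in a few lines. This is a minimal Python illustration under loud assumptions: the regex is a naive heuristic that catches only obvious instruction‑like phrases and is not an adequate defense on its own, the snippets are assumed to be already de‑identified, and `Snippet`, `filter_snippets`, and `provenance_footer` are hypothetical names.

```python
import hashlib
import re
from dataclasses import dataclass

# Naive heuristic for obvious instruction-like text; NOT a sufficient injection defense.
SUSPICIOUS = re.compile(
    r"ignore\b.{0,20}\binstructions|system prompt|disregard (the|all) (above|previous)",
    re.IGNORECASE,
)


@dataclass(frozen=True)
class Snippet:
    source_id: str  # hypothetical ID of an already de-identified source document
    text: str


def filter_snippets(snippets):
    """Split retrieved snippets into (kept, quarantined) using the heuristic above."""
    kept, quarantined = [], []
    for s in snippets:
        (quarantined if SUSPICIOUS.search(s.text) else kept).append(s)
    return kept, quarantined


def provenance_footer(snippets):
    """List each source used, with a short content hash so reviewers can verify it later."""
    lines = ["Sources:"]
    for s in snippets:
        digest = hashlib.sha256(s.text.encode("utf-8")).hexdigest()[:12]
        lines.append(f"- {s.source_id} (sha256:{digest})")
    return "\n".join(lines)
```

Quarantining rather than silently dropping suspicious snippets keeps them available for human review, and the content hash lets a reviewer confirm that the cited source has not changed since the note was drafted.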

AI in CT, MRI, Ultrasound, and Mammography: 2024-2025 Breakthroughs

CT/MRI: AI now optimizes positioning and scan ranges, reduces contrast dose, and accelerates reconstruction with fewer artifacts (European Radiology Experimental). Oncology pipelines leverage image synthesis, harmonization, and multi‑modality segmentation, improving response assessment across brain, breast, head/neck, liver, lung, and abdomen (PMC).

Breast imaging: Multimodal ultrasound radiomics and automated breast ultrasound (ABUS) are maturing fast, with AI reducing inter‑reader variability and refining risk stratification, including DCIS upstaging risk (Frontiers in Oncology; PMC).

Market tailwinds are strong: imaging AI is projected to scale from ~$762M (2022) to ~$14.4B (2032), while modality hardware advances—photon‑counting CT, low‑helium MRI—pair naturally with AI reconstruction (PharmiWeb).
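As a quick check on what that projection implies, the compound annual growth rate over the ten years from 2022 to 2032 follows directly from the two endpoints:

$$
\text{CAGR} = \left(\frac{14.4\ \text{B}}{0.762\ \text{B}}\right)^{1/10} - 1 \approx 18.9^{0.1} - 1 \approx 0.34
$$

That is roughly 34% growth per year, sustained for a decade.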

How I’d deploy next: GPU‑aware Kubernetes with node‑feature discovery; DICOMweb ingress; PHI‑scoped namespaces; Helm charts for inference services; canary rollouts gated on per‑site ECE and FPR/100 studies; and quarterly bias reviews with subgroup dashboards. Reference the CNCF Healthcare Working Group for containerization best practices.
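The canary gate can be expressed as a small decision function. This is a minimal Python sketch, assuming per‑site ECE and false positives per 100 studies are already computed for both the baseline and the canary model. The tolerances are hypothetical and should come from each site's validation baseline, and any site without a baseline fails closed.

```python
from dataclasses import dataclass


@dataclass
class SiteMetrics:
    site: str
    ece: float
    fp_per_100: float


# Hypothetical tolerances; derive real ones from each site's validation baseline.
MAX_ECE_INCREASE = 0.02
MAX_FP100_INCREASE = 1.0


def canary_decision(baseline, canary):
    """Return ("promote", []) if every site stays within tolerance, else ("rollback", failing_sites)."""
    base_by_site = {m.site: m for m in baseline}
    failing = []
    for m in canary:
        b = base_by_site.get(m.site)
        if b is None:
            failing.append(m.site)  # no baseline for this site: fail closed
            continue
        if m.ece - b.ece > MAX_ECE_INCREASE or m.fp_per_100 - b.fp_per_100 > MAX_FP100_INCREASE:
            failing.append(m.site)
    return ("rollback", failing) if failing else ("promote", [])
```

Gating per site rather than on pooled metrics prevents one well‑calibrated high‑volume site from masking a regression at a smaller one.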

Limitations to watch: external validity, silent failures on rare phenotypes, and governance debt as models update. Ship slowly, measure obsessively, and keep radiologists in the loop.

Author’s Note: This article reflects practical experience deploying AI systems in regulated healthcare environments. Always consult with legal, compliance, and clinical teams before implementing any AI solution. For feedback or questions, please use the appropriate professional channels in your organization.

Regulatory interpretations, requirements, and enforcement practices vary by jurisdiction and evolve over time. Always consult authoritative sources (e.g., FDA, EMA, MHRA, HHS OCR) for current regulations.