Disclaimer: This article and any content provided by the chatbot are for informational purposes only and do not constitute medical advice, diagnosis, or treatment. Always consult a qualified healthcare professional for any medical concerns.

Medical chatbot projects live or die on safety, data governance, and measurable clinical utility, not demos. I’ve shipped and evaluated healthcare AI in HIPAA and GDPR environments, and my rule of thumb is simple: pick the right model for the job, scaffold it with guardrails, and prove value with pilot data before scaling. In this guide, I break down how I evaluate and deploy a medical chatbot end to end, from use cases and model selection to oversight, privacy, and continuous improvement.

Table of Contents

Understanding the Role of a Medical Chatbot

Key Use Cases: Triage, Patient Education, FAQs, and Appointment Scheduling

When I scope a medical chatbot, I start with four high-ROI tracks:

- Triage and symptom assessment: Chatbots can collect structured symptom data and route patients to appropriate care levels. Research shows chatbots can match physician diagnostic accuracy in roughly 70% of cases, and Babylon Health’s conversational triage improved digital-first access and reduced wait times. I harden these flows with decision trees plus LLM reasoning and log every triage recommendation for auditability.

- Patient education and FAQs: From CDC’s Coronavirus Self-Checker to Woebot for mental health, high-quality education is a safe on-ramp. I bind responses to vetted knowledge bases and guideline snippets. Chatbots can act as assistants sharing evidence-based info on conditions, treatments, and prevention, exactly where retrieval-augmented generation (RAG) shines.

- Appointment scheduling: Automating bookings, reschedules, and cancellations saves real money. Direct EHR/PM system integrations enable real-time availability, while administrative work consumes ~25% of US healthcare spend—automation offsets that.

- Operational FAQs (coverage, parking, directions) and medication reminders: Low-risk, high-volume tasks that free staff time.

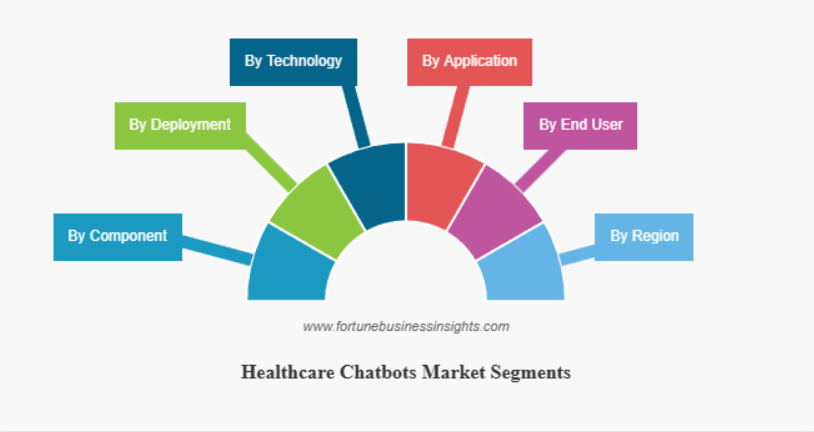

A quick business note: AI chatbots could save healthcare $3.6B globally by 2025, and as of April 2025, ~19% of medical groups use chatbots/virtual assistants, with physicians most positive on scheduling (78%), facility finding (76%), and medication info (71%). The global healthcare chatbots market is projected to grow from $1.98 billion in 2025 to $8.25 billion by 2032.

Identifying Target Users: Patients, Clinicians, and Healthcare Teams

I map user groups and success criteria early:

- Patients: 24/7 access to symptom checks, education, reminders, and scheduling when clinicians aren’t available. Social determinants intake via chat, piloted in EDs, can surface needs at scale. Success metrics: comprehension, task completion, and safe disposition.

- Clinicians: Drafting patient messages, structured triage notes, and education leaflets. Success: time saved, fewer back-and-forths, preserved clinical judgment.

- Care teams/ops: Eligibility, referrals, prior auth prompts, and capacity smoothing.

I document explicit handoff rules (e.g., chest pain → immediate escalation) and set channel boundaries (voice vs. web chat vs. portal).

Selecting the Best Healthcare AI Assistant

Comparing General LLMs vs. Medical-Specific Models

I don’t assume “bigger is better.” I benchmark models against the job:

- Medical-specific leaders: Google’s Med-PaLM 2 reached expert-level performance on USMLE-style questions, with physicians preferring its answers to physician-written ones on 8/9 axes. In 2025, Llama 3.1 405B performed on par with GPT-4 on complex medical cases—the first open model to match top proprietary systems. If you need explainability, on-prem control, and fine-tuning, strong open models are now viable.

- General LLMs: Great for language fluency and broad reasoning, but I always confine them with RAG, citations, and policy prompts for medical contexts.

For apples-to-apples comparisons, I use multi-task benchmarks like Stanford’s MedHELM (120+ scenarios across 22 task categories) and standardized leaderboards for tracking model progress.

Evaluating Medical Knowledge, Language Accuracy, and Compliance

Two truths can coexist: models can be surprisingly accurate, and still unsafe if poorly constrained. JAMA Network Open found chatbot answers to physician questions were predominantly accurate (median 5.5/6). Yet errors can have life-or-death consequences—accuracy must be measurable and monitored.

My evaluation checklist:

- Knowledge: USMLE-style QA, guideline-grounded cases, and institution-specific policies via RAG.

- Language: Readability targets (e.g., 6th–8th grade for patient-facing), bilingual checks where relevant.

- Safety: Red-team prompts (contraindications, rare edge cases), forced citation and refusal rules.

- Compliance: Data flow diagrams, PHI scoping, storage locations, BAAs, access controls. HIPAA/GDPR fit is non-negotiable and impacts architecture choices (on-prem vs. VPC vs. vendor API).

Ensuring Safety and Accuracy in Medical Chatbots

Managing Medical Content Errors and Providing Clear Disclaimers

I don’t ship without prominent disclaimers and escalation paths. That’s not academic: between 2022 and 2025, medical disclaimers in LLM outputs fell from 26.3% to under 1%, increasing the risk that users over-trust advice. I enforce sticky, context-aware disclaimers and make “This is not a diagnosis” part of the UX, not buried footnotes.

Content risks are real. BMJ Group highlights that chatbot answers to drug questions can be hard to read and may omit critical safety info, with readability scores implying college-level text—not acceptable for patients. Mount Sinai research showed models can elaborate on fabricated medical terms; simple prompt guardrails cut those errors almost in half. I combine prompt-level controls with post-generation validation and selective refusal.

Integrating Human Oversight and Verification Workflows

NCBI emphasizes that AI output must not be used in isolation—human analytical thinking remains essential. My approach:

- Human-in-the-loop (HITL): For triage above risk thresholds and any medication-specific advice, route to clinician review.

- Verification workflows: Validate data before EHR writes; use verified drug, interaction, and guideline databases; log provenance of every citation.

- Transparency: Clearly label AI-generated content, and train staff on hallucinations, bias, and when to override the bot.

I also run periodic safety councils with clinical leadership to review difficult transcripts and tune refusal policies.

Addressing Bias and Ensuring Equity

Healthcare AI bias stems from historical inequities in access, treatment, and data collection. Most datasets come from urban hospitals and wealthy countries, systematically excluding rural patients, ethnic minorities, and marginalized groups.

To mitigate bias, I ensure diverse data sources, multidisciplinary development teams, demographic monitoring of outcomes, and collaborative design with patient groups. This requires continuous auditing for concept drift and emerging biases.

Building Explainability and Transparency

Lack of AI explainability can shift decision-making power from patients and doctors to opaque algorithms. I implement algorithmic transparency through white-box models, visual explanations and natural language summaries, and interactive interfaces showing how the bot reached its conclusions.

Designing a Patient-Friendly Chatbot Experience

Effective Conversational Design and Tone for Healthcare Interactions

Good conversational design prevents most safety incidents. I encode:

- Memory and context: Maintain dialogue history, tolerate typos, and infer intent without being brittle.

- Tone: Professional, empathetic, and clear—no passive-aggressive phrasing or alarmist language.

- Flows: Map patient journeys (triage, refill, prep instructions), add explicit fallbacks for unresolved queries, and fast handoffs to humans.

For voice channels, generative AI voice agents can engage in fluid, contextual dialog that adapts to individual patient needs. For web/mobile, I add quick-reply chips and eligibility checklists.

Privacy, Data Security, and Regulatory Compliance Considerations

On PHI, I assume worst-case and architect backwards. Under HIPAA, AI vendors handling PHI become business associates, triggering Privacy and Security Rule obligations: encryption in transit/at rest, access controls, audit trails, and BAAs. In the EU, GDPR demands data minimization, anonymization where possible, transparency, and human review for impactful decisions.

I document:

- Data flow and residency (US/EU), KMS-backed encryption, and role-based access

- Consent and purpose limitation; PHI redaction for non-essential logs

- Automated compliance monitoring and periodic access reviews

If the vendor can’t sign a BAA or meet residency needs, I deploy on-prem or in a private VPC with egress controls.

Navigating FDA and State Regulations

As of 2025, the FDA is clarifying how regulation applies to medical devices based on large language models, particularly therapy chatbots. The FDA has authorized over 1,250 AI-enabled medical devices, though staffing constraints remain a challenge.

At the state level, Illinois enacted legislation prohibiting licensed mental health professionals from using AI chatbots as substitutes for direct patient communication. I stay current on evolving regulations and design for compliance from day one.

Testing, Deployment, and Continuous Improvement

Pilot Testing with Real Patients and Healthcare Staff

Before scaling, I run a contained pilot. Mass General Brigham’s return-to-work chatbot is a solid template: they mapped policy into a single flow diagram and built on Azure Health Bot, hitting 5,575 users in five weeks. I mirror that approach—agile sprints, shadow mode, and staged rollouts. I also A/B test copy, handoff triggers, and knowledge sources in production-like environments.

Validation must be holistic:

- Accuracy: Gold-standard answers reviewed by clinicians; spot-checks on rare cases

- Usability: Comprehension and trust scores; task success in low-bandwidth or mobile contexts

- Safety: Red-team suites (drug interactions, pediatric dosing), refusal correctness, escalation latency

- Equity: Performance by language, reading level, and device type

Real-world results are promising: Weill Cornell Medicine saw a 47% increase in digitally booked appointments, and Grewal Eye Institute’s WhatsApp bot achieved 675% ROI within 90 days.

Monitoring Performance, Collecting Feedback, and Iterating Safely

I instrument from day one using frameworks like the Health Care AI Chatbot Evaluation Framework (HAICEF)—a hierarchical structure covering safety, privacy, fairness, trustworthiness, and operational effectiveness.

Foundational metrics include emotional support and health literacy—how well the bot communicates understandably to lay users, assessed by both clinicians and patients. Operational KPIs: task completion, change in no-show rate, call volume reduction, and CSAT. For service quality, I track first-contact resolution, average resolution time, and self-service rate.

My continuous improvement loop:

- Log and label: Store prompts, responses, citations, safety events, and outcomes with PHI-safe pipelines

- Evaluate nightly: Regression tests on search stability, relevance, refusal accuracy, and sentiment

- Retrain/RAG refresh: Update guidelines, drug databases, and local policies on a set cadence; use canary releases

- Governance: Monthly review with compliance and clinical leaders; incident postmortems feed prompt and policy updates

One last thing: I keep disclaimers visible even after “success.” The MIT Tech Review finding on disappearing disclaimers is a reminder that safety is a product requirement, not a legal afterthought.

About the author: I’m Andy Chen. I cite official and peer-reviewed sources above; release details and findings reflect publications available through 2025. Pros: speed, access, measurable savings. Cons: hallucinations, readability gaps, integration overhead. With careful model selection, guardrails, and governance, the pros can safely win.

Disclaimer: The content on this website is for informational and educational purposes only and is intended to help readers understand AI technologies used in healthcare settings. It does not provide medical advice, diagnosis, treatment, or clinical guidance. Any medical decisions must be made by qualified healthcare professionals. AI models, tools, or workflows described here are assistive technologies, not substitutes for professional medical judgment. Deployment of any AI system in real clinical environments requires institutional approval, regulatory and legal review, data privacy compliance (e.g., HIPAA/GDPR), and oversight by licensed medical personnel. DR7.ai and its authors assume no responsibility for actions taken based on this content.