FHIR data sits at the center of every clinical AI project I ship. Not because it’s trendy, but because it gives me a reliable, inspectable substrate for models that need to survive audits, PHI controls, and cranky integration tests. If you’ve ever wrestled free‑text notes into features (and watched your evaluation crumble under edge cases), you know why structured EHR data matters. In this piece, I’ll show how I use FHIR, what works, what breaks, and how to get production‑grade results without hallucinated ICD codes showing up in your output.

Understanding FHIR: The Standard for Interoperable Healthcare Data

FHIR Basics and Its Role in Healthcare Interoperability

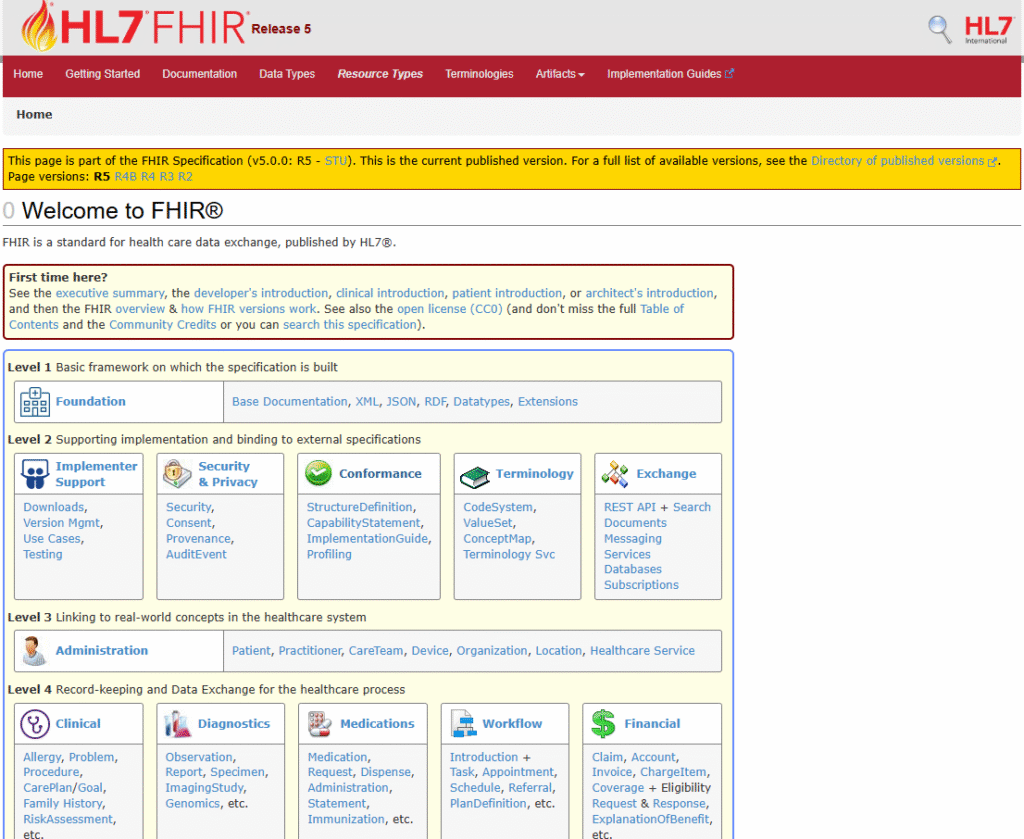

FHIR (Fast Healthcare Interoperability Resources) is a modular standard from HL7 that structures clinical data into typed resources (Patient, Observation, Condition, MedicationRequest, Encounter, and so on), linked by references and coded with terminologies like SNOMED CT, LOINC, and RxNorm. It’s widely supported across major EHRs and cloud services and is the backbone of SMART on FHIR apps. Today, most production integrations target FHIR R4/R4B; R5 exists with improvements, but many vendors’ write APIs still align to R4. See the official HL7 FHIR documentation.

FHIR helps because it enforces schema and vocabularies at the API boundary. I get predictable payloads, versioned profiles, and capability statements to negotiate features. That translates into less ad‑hoc mapping and more time training and evaluating models.

Why FHIR is Critical for Structured EHR Data and AI Applications

For AI, I need deterministic joins across patient, encounter, and time. FHIR’s resource model and search parameters (e.g., Observation?patient=…&date=ge2024-01-01) make this feasible. More importantly, coded elements (Observation.code, Condition.code) reduce label entropy, critical when benchmarking classification or forecasting tasks. Published studies consistently show that structured, standardized data improves comparability and portability of analytic models (see JAMIA on secondary use and standards and NIH discussions on interoperability and safety).
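To make the search-parameter idea concrete, here is a minimal sketch of building that kind of query in code. The helper name `build_search_url` and the server URL are placeholders of mine, not part of any FHIR library; the only FHIR behavior relied on is that repeating a parameter such as `date` combines the bounds with AND.

```python
from urllib.parse import urlencode


def build_search_url(base_url, resource_type, params):
    """Return a FHIR search URL; params is a list of (name, value) pairs."""
    # A list of pairs (not a dict) lets a parameter repeat,
    # e.g. a lower and an upper bound on the same date parameter.
    query = urlencode(params)
    return f"{base_url.rstrip('/')}/{resource_type}?{query}"


url = build_search_url(
    "https://your-fhir-server",  # placeholder host
    "Observation",
    [
        ("patient", "123"),
        ("category", "laboratory"),
        ("date", "ge2024-01-01"),  # on or after
        ("date", "lt2025-01-01"),  # strictly before
        ("_sort", "date"),
    ],
)
print(url)
```

Keeping query construction in one function makes it easy to log the exact URL alongside each extracted dataset, which helps when a join needs to be reproduced later.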

Structured vs Unstructured Healthcare Data

Advantages of Structured EHR Data for AI-Driven Healthcare Solutions

- Reproducibility: With FHIR, I can fix value sets and profiles, then pin training runs to a capability statement hash. That’s the difference between a paper result and a deployable model.

- Benchmarkable labels: Using LOINC for labs and SNOMED/ICD-10 for problems lets me compute exact-match and concept‑normalized metrics across sites.

- Safer inference: Structured inputs enable constrained decoding, where models must emit valid codes or fail schema validation. This curbs hallucinations.

- Governance: RBAC at the FHIR server plus audit logs simplify HIPAA/GDPR compliance reviews compared to scraping notes from a data lake.
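One way to read "pin training runs to a capability statement hash" from the reproducibility point is sketched below. It hashes a canonical JSON form of the server's CapabilityStatement and refuses to proceed if the hash changes. The function names and the choice of which top-level fields to ignore (`VOLATILE_KEYS`) are my assumptions; adjust them to what your server actually varies between requests.

```python
import hashlib
import json

# Assumption: these top-level fields can change without the API surface changing.
VOLATILE_KEYS = {"date", "meta", "text", "id"}


def capability_fingerprint(capability_statement):
    """SHA-256 over a canonical JSON form of a CapabilityStatement dict."""
    stable = {k: v for k, v in capability_statement.items() if k not in VOLATILE_KEYS}
    # sort_keys plus fixed separators make the serialization order-independent.
    canonical = json.dumps(stable, sort_keys=True, separators=(",", ":"), ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def assert_pinned(capability_statement, expected_hash):
    """Raise if the server's capabilities drifted from the pinned hash."""
    actual = capability_fingerprint(capability_statement)
    if actual != expected_hash:
        raise RuntimeError(f"CapabilityStatement drifted: {actual} != {expected_hash}")
    return actual


# Two payloads that differ only in key order and publication date.
cs_a = {"resourceType": "CapabilityStatement", "fhirVersion": "4.0.1",
        "date": "2024-01-01", "rest": [{"mode": "server"}]}
cs_b = {"rest": [{"mode": "server"}], "date": "2024-06-30",
        "fhirVersion": "4.0.1", "resourceType": "CapabilityStatement"}

pinned = capability_fingerprint(cs_a)
assert_pinned(cs_b, pinned)  # passes: same API surface
```

Storing `pinned` next to the training run's metadata gives reviewers a single value to compare when a result fails to reproduce.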

Limitations and Risks of Free-Text Clinical Notes

I still use notes for context, but they come with risk: PHI leakage, variability in phrasing, and brittle entity linking. Multiple studies flag documentation quality and variability as a threat to downstream model safety and equity. For LLMs, free text widens the hallucination surface; I measure this with schema‑adherence rate and code‑validity precision. When I must use notes, I apply de‑identification (e.g., Microsoft Presidio or Philter), then constrain outputs to FHIR‑valid enumerations or perform post‑hoc code validation against terminology services.
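The two metrics can be computed in a few lines. The definitions below are my working assumptions, since the terms are not formally defined here: schema adherence is the share of model outputs that parse as JSON and carry the required keys, and code‑validity precision is the share of emitted (system, code) pairs found in an allowed set. `REQUIRED_KEYS` is a hypothetical minimal contract, and in practice the allowed set would come from a terminology service rather than a literal.

```python
import json

REQUIRED_KEYS = {"resourceType", "code"}  # hypothetical minimal output contract


def parse_output(raw):
    """Return the parsed dict if raw is JSON with the required keys, else None."""
    try:
        obj = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(obj, dict) or not REQUIRED_KEYS.issubset(obj):
        return None
    return obj


def emitted_codes(obj):
    """Collect (system, code) pairs from a CodeableConcept-shaped 'code' field."""
    concept = obj["code"] if isinstance(obj["code"], dict) else {}
    return [(c.get("system"), c.get("code"))
            for c in concept.get("coding", []) if isinstance(c, dict)]


def score(raw_outputs, valid_codes):
    """Compute both rates over a batch of raw model outputs."""
    parsed = [p for p in map(parse_output, raw_outputs) if p is not None]
    codes = [pair for p in parsed for pair in emitted_codes(p)]
    n_valid = sum(1 for pair in codes if pair in valid_codes)
    return {
        "schema_adherence_rate": len(parsed) / len(raw_outputs) if raw_outputs else 0.0,
        "code_validity_precision": n_valid / len(codes) if codes else 0.0,
    }


ICD10CM = "http://hl7.org/fhir/sid/icd-10-cm"
outputs = [
    json.dumps({"resourceType": "Condition", "code": {"coding": [{"system": ICD10CM, "code": "E11.9"}]}}),
    json.dumps({"resourceType": "Condition", "code": {"coding": [{"system": ICD10CM, "code": "E11.999"}]}}),
    "Sure! Here is the JSON you asked for",  # not JSON at all
]
result = score(outputs, valid_codes={(ICD10CM, "E11.9")})
print(result)
```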

Leveraging FHIR Data in Healthcare AI Models

Incorporating FHIR Resources into AI Model Training

My typical pipeline:

- Ingest: Pull via FHIR REST with _since and patient compartment searches. Example: Observation?patient=123&category=laboratory&date=ge2024-01-01.

- Normalize: Resolve references, then expand codes using a terminology server (e.g., HAPI or Ontoserver) to unify synonyms and retired codes.

- Feature engineering: Convert Observations to time‑aligned panels (e.g., HbA1c, eGFR), roll up Conditions by system/ancestor concept, and derive episode windows from Encounters.

- Serialize: Write to Parquet with stable schemas. I keep both raw FHIR JSON and curated features for auditability.

- Validate: Run JSON Schema checks against R4 definitions and custom profiles; fail the build on unknown codes or unit mismatches.
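For the ingest step, search results arrive as paged Bundles, so the puller has to follow each page's `next` link. A minimal sketch, assuming the fetch function is injected so the paging logic can be exercised without a network; `iter_bundle_entries` and `http_fetch_json` are names I made up for illustration.

```python
import json
from urllib.request import Request, urlopen


def http_fetch_json(url):
    """Fetch one page from a FHIR server (no auth; add headers as your server requires)."""
    req = Request(url, headers={"Accept": "application/fhir+json"})
    with urlopen(req, timeout=30) as resp:
        return json.load(resp)


def iter_bundle_entries(first_url, fetch_json):
    """Yield resources from a searchset Bundle, following 'next' links until exhausted."""
    url = first_url
    while url:
        bundle = fetch_json(url)
        for entry in bundle.get("entry", []):
            if "resource" in entry:
                yield entry["resource"]
        # Bundle.link holds paging links; stop when there is no 'next' relation.
        url = next(
            (link["url"] for link in bundle.get("link", []) if link.get("relation") == "next"),
            None,
        )


# Offline demonstration with two fake pages keyed by URL.
pages = {
    "page-1": {
        "resourceType": "Bundle",
        "entry": [{"resource": {"id": "a"}}, {"resource": {"id": "b"}}],
        "link": [{"relation": "self", "url": "page-1"}, {"relation": "next", "url": "page-2"}],
    },
    "page-2": {"resourceType": "Bundle", "entry": [{"resource": {"id": "c"}}], "link": []},
}
ids = [r["id"] for r in iter_bundle_entries("page-1", pages.__getitem__)]
print(ids)
```

Against a real server the call would look like `iter_bundle_entries(search_url, http_fetch_json)`, with authentication added to the request as needed.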

For LLMs, I don’t feed raw notes first. I construct a structured context: a mini patient graph from FHIR (Conditions, Meds, Labs), then prompt models to reason over that, returning FHIR‑shaped JSON via function‑calling or JSON mode with a strict schema.
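As a rough illustration of the "mini patient graph", the sketch below reduces a list of R4 resources to a compact, coded context that can be serialized into a prompt. The output shape and the function names are assumptions for this example, not a standard; it reads only the first coding of each concept and handles `medicationCodeableConcept` but not `medicationReference`.

```python
import json


def first_coding(codeable_concept):
    """Return system/code/display of the first coding, or Nones if absent."""
    codings = (codeable_concept or {}).get("coding") or [{}]
    c = codings[0]
    return {"system": c.get("system"), "code": c.get("code"), "display": c.get("display")}


def build_context(resources):
    """Group Conditions, MedicationRequests, and Observations into a small dict."""
    ctx = {"conditions": [], "medications": [], "labs": []}
    for r in resources:
        rtype = r.get("resourceType")
        if rtype == "Condition":
            ctx["conditions"].append(first_coding(r.get("code")))
        elif rtype == "MedicationRequest":
            ctx["medications"].append(first_coding(r.get("medicationCodeableConcept")))
        elif rtype == "Observation":
            lab = first_coding(r.get("code"))
            qty = r.get("valueQuantity") or {}
            lab.update(value=qty.get("value"), unit=qty.get("unit"),
                       time=r.get("effectiveDateTime"))
            ctx["labs"].append(lab)
    return ctx


resources = [
    {"resourceType": "Condition",
     "code": {"coding": [{"system": "http://snomed.info/sct", "code": "44054006",
                          "display": "Type 2 diabetes mellitus"}]}},
    {"resourceType": "MedicationRequest",
     "medicationCodeableConcept": {"coding": [
         {"system": "http://www.nlm.nih.gov/research/umls/rxnorm", "code": "6809",
          "display": "metformin"}]}},
    {"resourceType": "Observation",
     "code": {"coding": [{"system": "http://loinc.org", "code": "4548-4",
                          "display": "Hemoglobin A1c"}]},
     "valueQuantity": {"value": 7.2, "unit": "%"},
     "effectiveDateTime": "2024-03-01"},
]
ctx = build_context(resources)
prompt_context = json.dumps(ctx, indent=2)  # goes into the prompt in place of raw notes
print(prompt_context)
```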

Real-World Examples of AI Using Structured Health Data

- Data harmonization: Northwestern’s 2024 work shows AI assisting with data mapping for interoperability across systems, underscoring the value of standardized inputs.

- CDS risk models: Training sepsis or readmission predictors on FHIR‑derived features yields cleaner external validation because code systems are explicit.

- Cohort retrieval: LLMs can translate natural language cohorts into FHIR search queries, then execute and self‑check against returned schema. I track hallucination by counting invalid FHIR parameters and non‑existent code systems.

- Research reuse: JAMIA reports emphasize standardized datasets improving secondary analyses and portability.
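For the cohort retrieval case, counting invalid FHIR parameters can be done before a generated query ever reaches the server. This is a sketch under assumptions: the `ALLOWED` table is hand-written here for illustration, whereas in practice it could be derived from the search parameters the server lists in its CapabilityStatement.

```python
from urllib.parse import parse_qsl, urlsplit

# Illustrative allow-list; a real one should reflect what your server supports.
ALLOWED = {
    "Observation": {"patient", "category", "code", "date", "_count", "_sort"},
    "Condition": {"patient", "code", "clinical-status", "onset-date"},
}


def invalid_search_params(query):
    """Return the problems found in a model-generated FHIR search string."""
    parts = urlsplit(query)
    resource_type = parts.path.strip("/").split("/")[-1]
    if resource_type not in ALLOWED:
        return [f"resourceType:{resource_type}"]
    problems = []
    for name, _value in parse_qsl(parts.query, keep_blank_values=True):
        base = name.split(":", 1)[0]  # drop modifiers such as ':in' or ':exact'
        if base not in ALLOWED[resource_type]:
            problems.append(name)
    return problems


print(invalid_search_params("Observation?patient=123&date=ge2024-01-01"))
print(invalid_search_params("Observation?patient=123&diagnosis=E11"))
print(invalid_search_params("Observatoin?patient=1"))
```

The length of the returned list, summed over a test set of prompts, gives a simple invalid-parameter count to track across model versions.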

Tools and Platforms for Effective FHIR Integration

FHIR Libraries, APIs, and Developer Resources

- Servers/SDKs: HAPI FHIR (Java), Firely .NET SDK, SMART on FHIR tooling, Inferno for conformance testing.

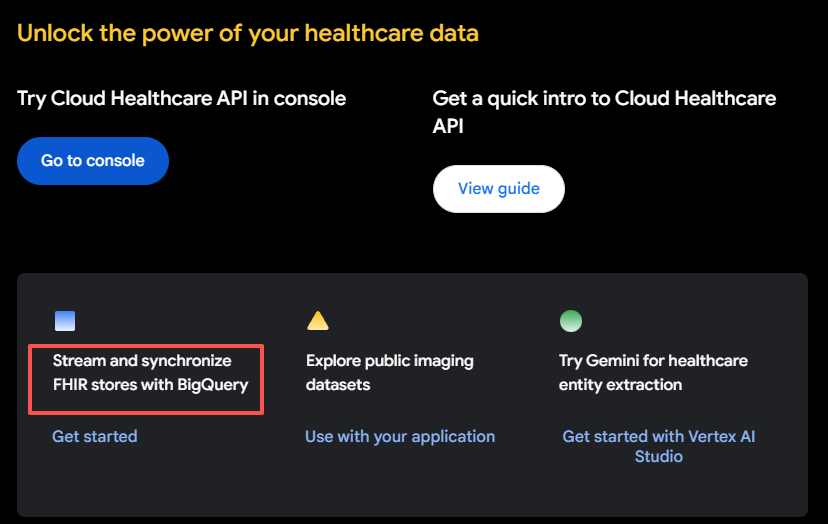

- Cloud: Google Cloud Healthcare API (FHIR stores + DICOM/HL7v2 bridges), Azure Health Data Services (managed FHIR, DICOM), AWS HealthLake (FHIR‑native analytics).

- Data generation/testing: Synthea for synthetic FHIR, FHIR Shorthand (FSH) + SUSHI for profile authoring.

- Terminology: HAPI Terminology, CSIRO Ontoserver.

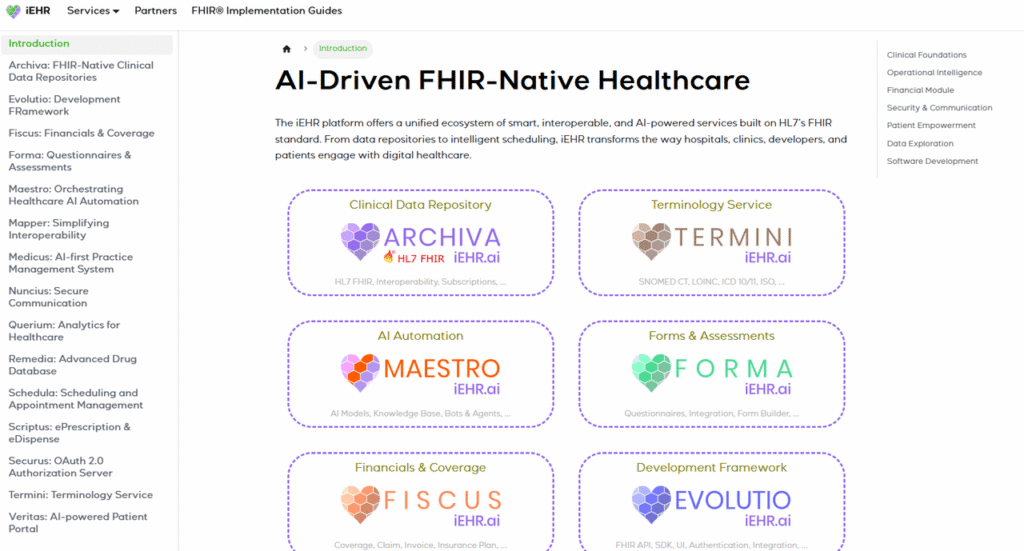

- Interop consulting/services: iehr.ai offers standards‑based integration services that can accelerate validation and deployment.

Quick curl to sanity‑check a server:

- Capability:

curl -s https://your-fhir-server/metadata | jq '.rest[0].resource[].type'

- Patient labs since date:

curl -s "https://your-fhir-server/Observation?patient=123&category=laboratory&date=ge2024-01-01" | jq '.'

Converting and Preparing FHIR Data for AI Workflows

- Flatten: Map Observation.value[x] to a typed column, normalize UCUM units (e.g., mmol/L to mg/dL when clinically acceptable) and keep provenance (Observation.id, effectiveDateTime).

- Windowing: Build encounter‑aligned snapshots and rolling features (e.g., last value, 7‑day delta, abnormal flags).

- Storage: Land curated features in Parquet; retain raw FHIR JSON in object storage for traceability.

- Contracts: Define a strict schema for model IO; for LLMs, enforce JSON schema with function‑calling and reject‑on‑invalid.

- Benchmarks: Track concept coverage, unit consistency rate, and code‑validity precision/recall before any modeling.
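The flatten and windowing steps above can be sketched as two small functions. Everything named here is illustrative: the `ANALYTE` and `CONVERSIONS` tables are hand-written assumptions (verify the LOINC codes against your terminology server), the glucose factor follows from a molar mass of about 180.16 g/mol, and only `valueQuantity` and `effectiveDateTime` are handled. Timestamps use explicit offsets because `datetime.fromisoformat` does not accept a trailing `Z` before Python 3.11.

```python
from datetime import datetime, timedelta

# Assumed mappings for illustration only.
ANALYTE = {"2345-7": "glucose", "14749-6": "glucose"}   # LOINC code -> analyte
CONVERSIONS = {("glucose", "mmol/L"): (18.016, "mg/dL")}  # analyte-specific factor


def flatten_observation(obs):
    """Turn one Observation into a typed row, unifying units and keeping provenance."""
    code = obs["code"]["coding"][0].get("code")
    qty = obs.get("valueQuantity") or {}
    value = qty.get("value")
    unit = qty.get("code") or qty.get("unit")  # prefer the UCUM code over the display unit
    analyte = ANALYTE.get(code)
    factor, target_unit = CONVERSIONS.get((analyte, unit), (1.0, unit))
    return {
        "obs_id": obs.get("id"),
        "source_code": code,
        "analyte": analyte,
        "value": None if value is None else value * factor,
        "unit": target_unit,
        "time": datetime.fromisoformat(obs["effectiveDateTime"]),
    }


def window_features(rows, as_of, days=7):
    """Last value and change across the trailing window, using only data at or before as_of."""
    past = sorted((r for r in rows if r["time"] <= as_of and r["value"] is not None),
                  key=lambda r: r["time"])
    if not past:
        return {"last_value": None, "delta": None}
    in_window = [r for r in past if r["time"] >= as_of - timedelta(days=days)]
    delta = in_window[-1]["value"] - in_window[0]["value"] if len(in_window) > 1 else None
    return {"last_value": past[-1]["value"], "delta": delta}


def make_obs(obs_id, code, value, unit, when):
    return {"id": obs_id, "code": {"coding": [{"system": "http://loinc.org", "code": code}]},
            "valueQuantity": {"value": value, "code": unit}, "effectiveDateTime": when}


rows = [flatten_observation(o) for o in (
    make_obs("o1", "14749-6", 5.0, "mmol/L", "2024-03-01T08:00:00+00:00"),
    make_obs("o2", "2345-7", 108.0, "mg/dL", "2024-03-05T08:00:00+00:00"),
    make_obs("o3", "2345-7", 150.0, "mg/dL", "2024-03-10T08:00:00+00:00"),  # after as_of
)]
features = window_features(rows, as_of=datetime.fromisoformat("2024-03-06T00:00:00+00:00"))
print(features)
```

Filtering on `time <= as_of` is what keeps the third observation out of the snapshot; the same guard is worth keeping in any feature that summarizes history.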

Challenges and Best Practices in Using FHIR Data

Ensuring Data Quality and Standardization

- Profile drift: Vendor FHIR profiles vary. Pin to specific Implementation Guides (IGs) and validate with Inferno or custom Schematron.

- Code chaos: Create a terminology mapping layer and document versioning. Fail ingestion on retired/unknown codes unless an explicit mapping exists.

- Temporal integrity: Many Observations lack precise times. Impute cautiously and flag derived timestamps to avoid leaking future info.

- OMOP vs FHIR: For analysis, OMOP’s tables can be simpler; I often transform FHIR→OMOP for modeling, then keep FHIR for serving and audit.
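Two of the practices above, failing ingestion on unmapped codes and flagging derived timestamps, are small enough to sketch together. The names are hypothetical, the code sets are in-memory stand-ins for a terminology service, and the fallback order in `resolve_time` (period end before start, then `issued`) is my assumption: preferring the later time is the conservative choice when the goal is to avoid leaking future information.

```python
class UnknownCodeError(ValueError):
    """Raised when a code is neither active nor explicitly mapped."""


def normalize_code(system, code, active_codes, mappings):
    """Return an active (system, code) pair, applying an explicit mapping if one exists."""
    key = (system, code)
    if key in active_codes:
        return key
    if key in mappings:
        return mappings[key]
    raise UnknownCodeError(f"No active code or mapping for {system}|{code}")


def resolve_time(obs):
    """Return (timestamp string, derived flag) for an Observation dict."""
    if obs.get("effectiveDateTime"):
        return obs["effectiveDateTime"], False
    period = obs.get("effectivePeriod") or {}
    for key in ("end", "start"):
        if period.get(key):
            return period[key], True  # derived from a period, not a point in time
    if obs.get("issued"):
        return obs["issued"], True    # report time, not measurement time
    return None, True


SYSTEM = "http://example.org/codes"  # placeholder code system
active = {(SYSTEM, "NEW-1")}
mapped = {(SYSTEM, "OLD-1"): (SYSTEM, "NEW-1")}

print(normalize_code(SYSTEM, "OLD-1", active, mapped))
print(resolve_time({"effectivePeriod": {"start": "2024-03-01", "end": "2024-03-02"}}))
```

Carrying the derived flag into the feature table lets later steps exclude or down-weight rows whose timing is uncertain.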

Privacy, Compliance, and Security Considerations with FHIR Data

- HIPAA/GDPR: Minimize fields and use purpose‑bound datasets. For EU data, establish a lawful basis and apply data minimization by design.

- Access controls: Use SMART Backend Services (JWT client credentials) and per‑resource scopes. Log all accesses.

- PHI handling: De‑identify notes before LLM ingestion; prefer structured FHIR features when possible.

- Hallucination controls: Constrain model outputs to whitelisted code systems and validate against terminology services. Measure: schema adherence, invalid‑code rate, and clinical consistency checks.

- Transparency: Document FHIR version (e.g., R4B), profile versions, and release dates of models and datasets so reviewers can reproduce results.
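Field minimization from the list above can be enforced mechanically with a per-resource allow-list. The sketch below is an assumption-laden example, not a de-identification method: `ALLOWED_FIELDS` is a hypothetical purpose-bound contract, it filters top-level elements only, and fields such as `birthDate` may still need generalizing depending on your compliance review.

```python
# Hypothetical purpose-bound contract: top-level fields a dataset may contain.
ALLOWED_FIELDS = {
    "Patient": {"resourceType", "id", "gender", "birthDate"},
    "Observation": {"resourceType", "id", "status", "code", "subject",
                    "effectiveDateTime", "valueQuantity"},
}


def minimize(resource):
    """Keep only allow-listed top-level fields; reject resource types with no contract."""
    rtype = resource.get("resourceType")
    allowed = ALLOWED_FIELDS.get(rtype)
    if allowed is None:
        raise ValueError(f"No minimization contract for resource type {rtype!r}")
    return {k: v for k, v in resource.items() if k in allowed}


patient = {
    "resourceType": "Patient",
    "id": "123",
    "gender": "female",
    "birthDate": "1980-05-17",
    "name": [{"family": "Example", "given": ["Pat"]}],
    "address": [{"city": "Springfield"}],
    "telecom": [{"system": "phone", "value": "555-0100"}],
}
slim = minimize(patient)
print(sorted(slim))
```

Rejecting unknown resource types, rather than passing them through, means a new resource cannot enter a dataset until someone has decided which of its fields are needed.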

Balanced view: FHIR won’t fix missing data or biased coding. It does, however, give us the rails to measure, compare, and control risk in a way ad‑hoc CSVs never will.

Disclaimer: The content on this website is for informational and educational purposes only and is intended to help readers understand AI technologies used in healthcare settings. It does not provide medical advice, diagnosis, treatment, or clinical guidance. Any medical decisions must be made by qualified healthcare professionals. AI models, tools, or workflows described here are assistive technologies, not substitutes for professional medical judgment. Deployment of any AI system in real clinical environments requires institutional approval, regulatory and legal review, data privacy compliance (e.g., HIPAA/GDPR), and oversight by licensed medical personnel. DR7.ai and its authors assume no responsibility for actions taken based on this content.
