Disclaimer:

The content on this website is for informational and educational purposes only and is intended to help readers understand AI technologies used in healthcare settings. It does not provide medical advice, diagnosis, treatment, or clinical guidance. Any medical decisions must be made by qualified healthcare professionals. AI models, tools, or workflows described here are assistive technologies, not substitutes for professional medical judgment. Deployment of any AI system in real clinical environments requires institutional approval, regulatory and legal review, data privacy compliance (e.g., HIPAA/GDPR), and oversight by licensed medical personnel. DR7.ai and its authors assume no responsibility for actions taken based on this content.

When I started wiring LLMs into clinical workflows, I realized very quickly that “just call the API” wasn’t going to cut it. Costs spiked in staging, PHI almost leaked in logs, and a seemingly minor model upgrade changed triage behavior overnight.

In this guide I’ll walk through how I now approach medical AI API integration, end to end, so you can plug OpenAI, Azure OpenAI, Google, or similar services into EHRs and health apps without getting burned on HIPAA, costs, or silent behavior changes. I’ll reference official docs and a few hard‑earned lessons from real deployments along the way.


How to Choose the Right Medical AI API Service for Your Needs

A Comprehensive Comparison of Leading AI API Platforms (OpenAI, Google, AWS, Azure)

When I evaluate platforms for medical AI API integration, I start with four axes: compliance posture, deployment model, pricing, and ecosystem.

OpenAI (direct)

- Strengths: Fast access to newest models (GPT-4.x, o-series, etc.), strong tooling, good docs and pricing transparency.

- Limitations for PHI: As of November 2025, OpenAI has not yet signed HIPAA Business Associate Agreements (BAAs) with the vast majority of customers. Enterprise-grade controls and zero-retention commitments exist, but unless you have explicitly executed a BAA with OpenAI for the API service, you must not send any PHI. Always check the latest status directly on OpenAI’s Enterprise Privacy page or with your legal team before transmitting protected health information. For true HIPAA workloads I still route through Azure OpenAI or Google Vertex AI instead.

Azure OpenAI Service

Based on Microsoft’s official Azure OpenAI and Healthcare API docs, this is my default for US HIPAA workloads.

- Strengths:

  - Can be deployed in Azure regions that co‑locate with other HIPAA‑eligible services.

  - Microsoft will sign a BAA for covered services.

  - VNet isolation, private endpoints, and Azure Monitor integrate cleanly with existing security programs.

- Trade‑offs: Slightly behind OpenAI direct on latest model availability and niche features.

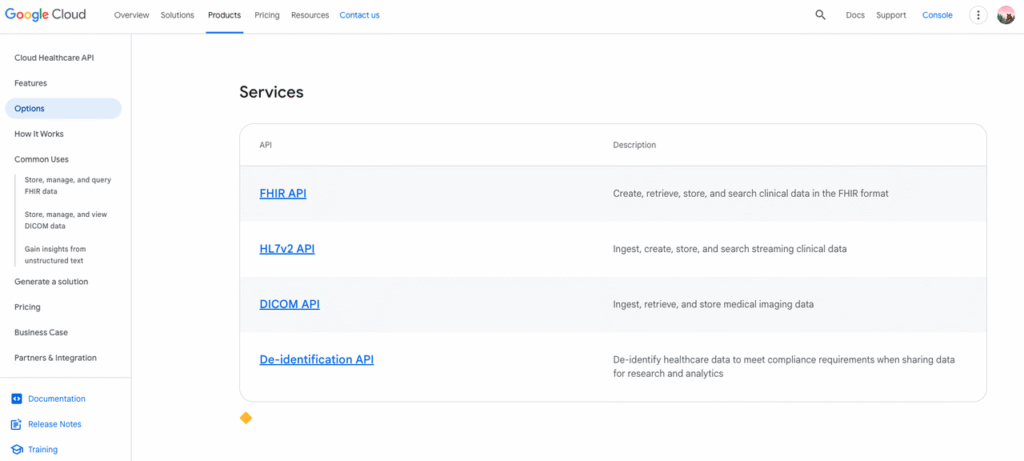

Google Cloud (Vertex AI + Healthcare API)

- Strengths: Tight coupling with Cloud Healthcare API (DICOM, HL7v2, FHIR), strong de‑identification tooling, and mature IAM.

- Trade‑offs: Model catalog is excellent but not always the first to ship new foundation models. You’ll often pair Vertex AI with the Healthcare API for structured data workflows.

AWS (Bedrock + HealthLake)

- Strengths: Deep enterprise adoption, flexible choice of models (Anthropic, Cohere, etc.), and integrations with Amazon HealthLake for clinical data.

- Trade‑offs: More moving parts; you often end up stitching together multiple services to get an equivalent experience.

My pattern: Azure OpenAI or Vertex AI when I need HIPAA‑aligned infrastructure now; OpenAI direct and other vendors for de‑identified R&D or non‑PHI workflows.

Key Considerations for Medical AI API Integration: Model Options, Cost, and Compliance

When I do technical due diligence, I literally keep a three‑column checklist: model, money, medical risk.

- Model options

  - Purpose: triage, summarization, coding, patient‑facing chat, clinical decision support (CDS).

  - Guardrails: Does the platform support tools/function calling, system messages, and safety policies that you can tune for medical use?

  - Benchmarks: I look for any published MedQA, MedMCQA, or clinical note summarization evaluations; if none exist, I run my own.

- Cost modeling

  - Start from public pricing tables (OpenAI, Azure, Vertex, Bedrock).

  - Estimate per‑request tokens: I often log a week of real prompts/responses in staging and compute a p95 token count.

  - Multiply by expected daily request volume and add 20–30% headroom for prompt growth.

  - For high‑volume endpoints, I sometimes split traffic: expensive models for high‑risk cases, cheaper models for routine tasks. You can also review LLM API pricing comparisons to optimize your budget.

- Compliance and data handling

  - BAA / DPA: Don’t ship PHI until there’s a signed BAA (US) or DPA (EU) covering the AI service.

  - PHI scope: Decide which data truly must be PHI and aggressively de‑identify everything else. Many use cases work with problem lists, meds, and age ranges instead of raw notes.

  - Logging: Disable verbose logs that include full prompts or responses, or run your own gateway that redacts PHI before logs are written.

If I can’t get clear answers on these three from a vendor, I treat them as R&D‑only, no‑PHI tools.
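The cost‑modeling steps above reduce to simple arithmetic. The following is a minimal sketch. It assumes you have per‑request token counts logged from staging. The prices, and the names `percentile` and `estimateDailyCost`, are hypothetical placeholders rather than any vendor's rates or API. Input and output tokens are priced separately because most public pricing tables do so.

```typescript
// Hypothetical prices; substitute the vendor's current published rates.
interface Pricing {
  inputPerMillion: number; // USD per 1M prompt tokens
  outputPerMillion: number; // USD per 1M completion tokens
}

// One logged request from staging.
interface TokenSample {
  inputTokens: number;
  outputTokens: number;
}

// Nearest-rank percentile, p in (0, 100].
function percentile(values: number[], p: number): number {
  if (values.length === 0) throw new Error("no samples");
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length); // 1-based rank
  return sorted[Math.max(0, rank - 1)];
}

// Daily cost = p95 cost per request x daily volume x (1 + headroom).
function estimateDailyCost(
  samples: TokenSample[],
  dailyRequests: number,
  pricing: Pricing,
  headroom = 0.25 // middle of the 20-30% buffer for prompt growth
): number {
  const p95In = percentile(samples.map((s) => s.inputTokens), 95);
  const p95Out = percentile(samples.map((s) => s.outputTokens), 95);
  const perRequest =
    (p95In * pricing.inputPerMillion + p95Out * pricing.outputPerMillion) / 1e6;
  return perRequest * dailyRequests * (1 + headroom);
}
```

Here is a worked example. Take a p95 of 2,000 input and 400 output tokens, hypothetical prices of $2.50 and $10 per million tokens, and 10,000 requests a day. That comes to $90/day before headroom and $112.50 with 25% headroom. Taking the p95 of input and output separately slightly overstates a typical request, which is the safe direction for a budget.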

Ensuring Robust Authentication and Security for Medical AI APIs

Securely Handling API Keys and Tokens in Medical AI Integration

The biggest security mistake I still see is AI keys baked into mobile apps or front‑end JavaScript. For medical data, that’s a non‑starter.

What I do instead:

- Backend‑only secret use: API keys (OpenAI, Azure, Google, AWS) live only in backend services, never on the client.

- Managed secret stores: Use Azure Key Vault, AWS Secrets Manager, or Google Secret Manager. Keys are rotated on a schedule (automatically where the store supports it) and never checked into Git.

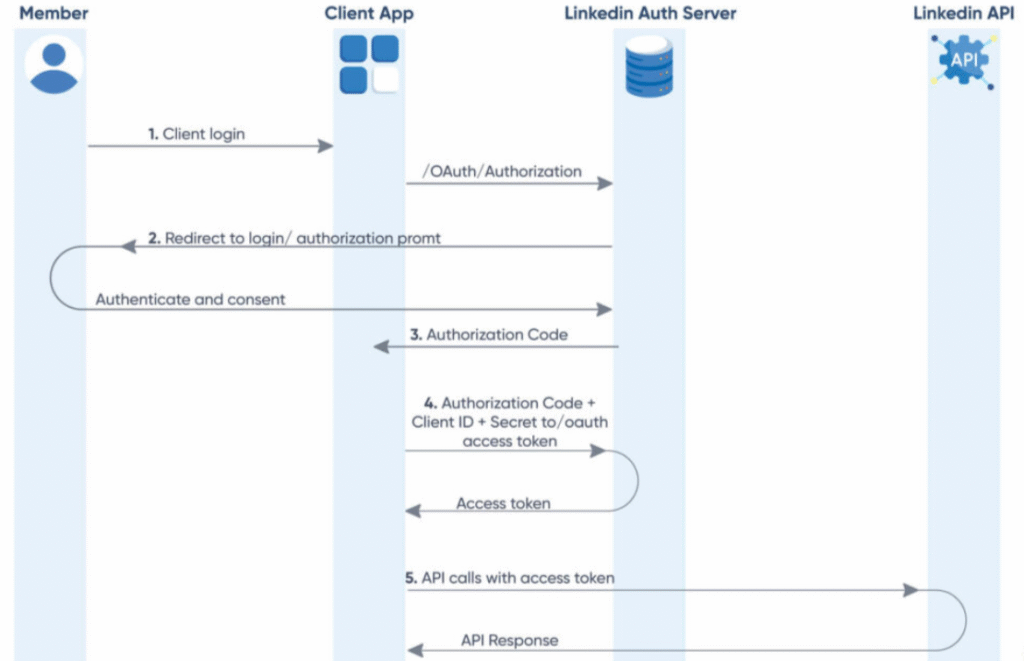

- Short‑lived access tokens: When the platform supports OAuth 2.0 or workload identity, I prefer that over raw static keys. The same OAuth 2.0 patterns used for EHR authentication apply here.

For mobile/web apps, the client authenticates to my backend (via OAuth/OpenID, mTLS, or session tokens). My backend then calls the AI service on their behalf. Microsoft provides comprehensive guidance on authentication and authorization for Healthcare APIs.
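For the short‑lived token pattern, the backend usually caches a token and refreshes it shortly before expiry, rather than requesting a new one per call. The following is a minimal sketch. It assumes a hypothetical `fetchToken` function that wraps whatever your platform provides (an OAuth 2.0 client‑credentials call or a managed/workload identity SDK) and returns the token with its expiry. `TokenCache` is an illustrative name, and the clock is injected so the logic can be tested.

```typescript
// Token shape returned by a hypothetical provider (OAuth client-credentials call,
// managed identity SDK, etc.).
interface AccessToken {
  token: string;
  expiresAtMs: number; // absolute expiry in epoch milliseconds
}

// Caches one short-lived token and refreshes it `skewMs` before expiry so that
// requests never go out with a token that is about to lapse.
class TokenCache {
  private current: AccessToken | null = null;
  private pending: Promise<AccessToken> | null = null;
  private readonly fetchToken: () => Promise<AccessToken>;
  private readonly now: () => number;
  private readonly skewMs: number;

  constructor(
    fetchToken: () => Promise<AccessToken>,
    now: () => number = () => Date.now(),
    skewMs = 60000
  ) {
    this.fetchToken = fetchToken;
    this.now = now;
    this.skewMs = skewMs;
  }

  async getToken(): Promise<string> {
    const cached = this.current;
    if (cached && this.now() < cached.expiresAtMs - this.skewMs) {
      return cached.token;
    }
    // Concurrent callers share a single in-flight refresh instead of
    // stampeding the identity provider.
    if (!this.pending) {
      this.pending = this.fetchToken().then(
        (t) => {
          this.pending = null;
          this.current = t;
          return t;
        },
        (e) => {
          this.pending = null; // allow a retry on the next call
          throw e;
        }
      );
    }
    const fresh = await this.pending;
    return fresh.token;
  }
}
```

Because the static key never leaves the secret store, a leaked token from this cache expires within minutes rather than staying valid until someone rotates the key.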

Achieving HIPAA Compliance in Medical AI API Usage

HIPAA compliance is less about a magic checkbox and more about how you use the API.

From reviewing HIPAA and AI guidance, along with healthcare security best practices, my core rules are:

1. Business Associate Agreement (BAA):

   - Use only cloud services listed as HIPAA‑eligible and covered by a BAA.

   - If the AI platform can’t offer a BAA, treat it as non‑PHI only. Resources like HIPAA-compliant AI API guides can help you navigate vendor options.

2. Minimum necessary PHI: Before each integration, I literally list every field sent to the model and ask: Can I do this with a de‑identified or pseudonymized version instead?

3. Data residency and retention:

   - Pin workloads to allowed regions.

   - Disable vendor data retention or training on your data where supported.

   - Maintain your own retention schedule for prompts/responses.

4. Auditability: Log who invoked which prompt template and which model version responded, but avoid logging raw PHI when possible. This is crucial when auditors ask, “Why did the model say this?” For deeper insights, review HIPAA compliance and AI security requirements in 2025 and learn how to build HIPAA-compliant AI applications.
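Rules 2 and 4 can be enforced in code rather than by convention. The following is a minimal sketch that assumes a hypothetical `PatientContext` record already pulled from your EHR integration:

- An explicit allowlist decides what leaves your boundary.
- Exact age is coarsened to a band, with ages over 89 grouped as "90+" in line with HIPAA Safe Harbor.
- The audit entry records which fields were sent and which template and model version ran, without the values themselves.

All type and function names here are illustrative. This alone is not de‑identification; free‑text problem or medication entries can still carry identifiers.

```typescript
// Hypothetical internal record; real shapes depend on your EHR integration.
interface PatientContext {
  mrn: string;
  name: string;
  birthDate: string; // ISO yyyy-mm-dd
  ageYears: number;
  problems: string[];
  medications: string[];
  noteText: string;
}

// The only fields allowed to reach the model (minimum necessary).
interface ModelPayload {
  ageBand: string;
  problems: string[];
  medications: string[];
}

interface AuditEntry {
  timestamp: string;
  userId: string;
  promptTemplateId: string;
  modelVersion: string;
  fieldsSent: string[]; // field names only, never values
}

// Coarsen exact age to a 10-year band; Safe Harbor requires ages over 89 to be grouped.
function toAgeBand(age: number): string {
  if (age >= 90) return "90+";
  const lower = Math.floor(age / 10) * 10;
  return `${lower}-${lower + 9}`;
}

function buildModelPayload(ctx: PatientContext): ModelPayload {
  // MRN, name, birth date, and the raw note are deliberately never copied.
  return {
    ageBand: toAgeBand(ctx.ageYears),
    problems: [...ctx.problems],
    medications: [...ctx.medications],
  };
}

function buildAuditEntry(
  userId: string,
  promptTemplateId: string,
  modelVersion: string,
  payload: ModelPayload,
  now: Date = new Date()
): AuditEntry {
  return {
    timestamp: now.toISOString(),
    userId,
    promptTemplateId,
    modelVersion,
    fieldsSent: Object.keys(payload).sort(),
  };
}
```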

Efficient Medical AI API Workflow: A Step-by-Step Guide

Sending Medical Data Requests to AI APIs: A Practical Example

I usually standardize on a simple pattern: structured clinical context + explicit task instructions + safety rails.

Example (Node/TypeScript, Azure OpenAI, de‑identified data):

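```typescript
// Assumes the @azure/openai 1.0.0-beta SDK (OpenAIClient.getChatCompletions); newer
// code may use the `openai` package's AzureOpenAI client instead. The environment
// variable names below are placeholders for your own configuration.
import { OpenAIClient, AzureKeyCredential } from "@azure/openai";

// Credentials stay server-side, e.g. injected from Key Vault at deploy time.
const client = new OpenAIClient(
  process.env.AZURE_OPENAI_ENDPOINT!,
  new AzureKeyCredential(process.env.AZURE_OPENAI_KEY!)
);
const deploymentName = process.env.AZURE_OPENAI_DEPLOYMENT!;

const systemPrompt = `You are a medical summarization assistant.
- Summarize in 3–5 bullet points.
- Do not invent diagnoses.
- If information is missing, say "insufficient data".`;

// redactedNoteText is produced upstream and must already be de-identified.
const userPrompt = `Patient: 54-year-old male with HTN and T2DM.
Recent note: ${redactedNoteText}`;

const response = await client.getChatCompletions(
  deploymentName,
  [
    { role: "system", content: systemPrompt },
    { role: "user", content: userPrompt },
  ],
  { temperature: 0.1, maxTokens: 256 } // low variability, capped output length
);

// First choice's text; an empty string signals "no usable output".
const summary = response.choices[0]?.message?.content ?? "";
```

Key details I’ve learned to always set: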

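- Low temperature (0–0.2) for clinical tasks. This reduces output variability and makes responses more repeatable, but it does not by itself prevent hallucinations.

- Max tokens capped to control both cost and rambling responses.

- A system prompt that forbids invented diagnoses and forces an explicit "insufficient data" when the context is thin.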
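The request example assumes `redactedNoteText` was scrubbed upstream. The following is a minimal sketch of a pattern‑based scrubber, useful as a last line of defense before prompts or logs are written. The patterns and the names `REDACTION_RULES` and `scrubIdentifiers` are illustrative assumptions. The scrubber catches only obviously formatted identifiers; it misses names and most free‑text identifiers. It is not a substitute for HIPAA de‑identification (Safe Harbor or Expert Determination) or a vetted de‑identification service.

```typescript
// Illustrative patterns only; order matters (SSN and MRN run before the looser phone pattern).
const REDACTION_RULES: Array<[RegExp, string]> = [
  [/\b\d{3}-\d{2}-\d{4}\b/g, "[SSN]"],
  [/\bMRN[:#]?\s*\d+\b/gi, "[MRN]"],
  [/[\w.+-]+@[\w-]+(\.[\w-]+)+/g, "[EMAIL]"],
  [/\(?\b\d{3}\)?[-.\s]?\d{3}[-.\s]\d{4}\b/g, "[PHONE]"],
  [/\b\d{1,2}\/\d{1,2}\/\d{2,4}\b/g, "[DATE]"],
];

// Applies each rule in turn; String.replace with a /g regex replaces every match.
function scrubIdentifiers(text: string): string {
  return REDACTION_RULES.reduce(
    (acc, [pattern, label]) => acc.replace(pattern, label),
    text
  );
}
```

Names pass through untouched, which is exactly why this belongs behind, not instead of, proper de‑identification.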

Parsing AI Responses: Handling Results from Medical AI Models

I avoid letting free‑text seep directly into downstream logic. Two patterns work well:

1. Constrained JSON outputs: Ask the model to respond with JSON and validate it server‑side:

   ```json
   {
     "summary": "...",
     "warnings": ["insufficient data", "possible med interaction"]
   }
   ```

   I then run a JSON schema validator. If parsing or validation fails, I treat it as an error and fall back to a simpler pathway.

2. Never fully autonomous: For anything that could influence diagnosis, prescribing, or triage, I route outputs into a clinician review UI. The model proposes; the human disposes.

I also tag each response with the model ID and version so, when a vendor upgrades models, I can correlate behavior shifts back to that change.
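Validation and model tagging fit naturally in one parse step. The following is a minimal sketch, assuming the JSON shape from the example above. The hand‑rolled `isSummaryResult` check stands in for a real JSON Schema validator (such as Ajv) only to keep the sketch dependency‑free. All names are illustrative.

```typescript
// Expected shape of the model's JSON reply (mirrors the example above).
interface SummaryResult {
  summary: string;
  warnings: string[];
}

// Every result, good or bad, carries the model that produced it.
interface TaggedResult {
  ok: true;
  value: SummaryResult;
  modelId: string;
  modelVersion: string;
}

interface FailedResult {
  ok: false;
  reason: string;
  modelId: string;
  modelVersion: string;
}

// Structural check standing in for a JSON Schema validator.
function isSummaryResult(x: unknown): x is SummaryResult {
  if (typeof x !== "object" || x === null) return false;
  const o = x as Record<string, unknown>;
  return (
    typeof o.summary === "string" &&
    Array.isArray(o.warnings) &&
    o.warnings.every((w) => typeof w === "string")
  );
}

function parseModelOutput(
  raw: string,
  modelId: string,
  modelVersion: string
): TaggedResult | FailedResult {
  let parsed: unknown;
  try {
    parsed = JSON.parse(raw);
  } catch {
    return { ok: false, reason: "invalid JSON", modelId, modelVersion };
  }
  if (!isSummaryResult(parsed)) {
    return { ok: false, reason: "schema mismatch", modelId, modelVersion };
  }
  return { ok: true, value: parsed, modelId, modelVersion };
}
```

A caller that gets `ok: false` takes the simpler fallback pathway. Because failures also carry `modelVersion`, a spike in schema mismatches after a vendor upgrade shows up directly in the logs.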

Seamlessly Integrating Medical AI APIs into Healthcare Applications

Integrating Medical AI APIs with EHR Systems and Mobile Apps

In practice, I see two common integration points:

1. EHR integration (Epic, Cerner, etc.)

   - Use SMART-on-FHIR or native app frameworks to embed your UI in the EHR.

   - Pull only the FHIR resources you need (e.g., Encounter, Observation, MedicationRequest) as input to the AI.

   - Never let the EHR call the AI vendor directly; route through your backend, where you can enforce prompts, redaction, and logging.

   Learn more about how EHRs achieve interoperability, integrating health apps with Epic EHR, and implementing FHIR with Epic, Cerner, and other platforms.

2. Patient and clinician mobile apps

   - Mobile client → your API gateway → AI service.

   - Use the same OAuth 2.0 patterns you already use for EHR and identity providers.

   - For patient‑facing features, I add extra disclaimers and keep model behavior ultra‑conservative.
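Pulling only the FHIR resources you need pairs well with flattening only the fields you need. The following is a minimal sketch. It assumes FHIR R4 `Observation` and `MedicationRequest` resources already fetched by your backend (for example, during a SMART‑on‑FHIR session). The interfaces model just the handful of R4 elements used here and simplify the full resources. `label` and `toModelContext` are illustrative names.

```typescript
// Minimal subsets of FHIR R4 resources; real resources carry many more elements.
interface Coding {
  system?: string;
  code?: string;
  display?: string;
}

interface CodeableConcept {
  text?: string;
  coding?: Coding[];
}

interface Observation {
  resourceType: "Observation";
  code: CodeableConcept;
  valueQuantity?: { value?: number; unit?: string };
}

interface MedicationRequest {
  resourceType: "MedicationRequest";
  status: string;
  medicationCodeableConcept?: CodeableConcept;
}

// Prefer CodeableConcept.text, then the first coding's display.
function label(cc: CodeableConcept | undefined): string {
  return cc?.text ?? cc?.coding?.[0]?.display ?? "unknown";
}

// Copies only the elements the summarization task needs; patient identifiers
// and references are never read, so they cannot leak into the prompt.
function toModelContext(observations: Observation[], meds: MedicationRequest[]): string {
  const obsLines: string[] = [];
  for (const o of observations) {
    const q = o.valueQuantity;
    if (!q || q.value === undefined) continue; // skip observations without a numeric value
    obsLines.push(`- ${label(o.code)}: ${q.value}${q.unit ? " " + q.unit : ""}`);
  }
  const medLines = meds
    .filter((m) => m.status === "active")
    .map((m) => `- ${label(m.medicationCodeableConcept)}`);
  return ["Observations:", ...obsLines, "Active medications:", ...medLines].join("\n");
}
```

Because the resulting string is plain text, it drops straight into the user prompt of the earlier request example, and the backend stays the only component that ever sees full FHIR resources.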

Implementing Effective Error Handling and Fallback Strategies

API failures in healthcare are not theoretical; I’ve seen rate-limit errors spike right in the middle of clinic hours.

My baseline error‑handling plan, informed partly by API error handling best practices and real outages:

- Categorize errors: network, timeout, rate limit, validation, and safety/policy blocks.

- Retry with backoff for transient issues (network, 429s), but cap retries to preserve latency budgets.

- Graceful degradation:

  - Fall back to cached summaries or simpler rules‑based tools.

  - Never block the clinical workflow just because the AI is down.

- User messaging: Be explicit, e.g., “AI summarization temporarily unavailable; you can still view the raw note.”

Most importantly, I log enough context (sans PHI) to reproduce issues and refine prompts or routing logic.
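The categorize, retry, and degrade plan fits in one small wrapper. The following is a minimal sketch. It assumes SDK errors are first mapped into a hypothetical `{ kind, message }` shape using the categories listed above. It uses exponential backoff with full jitter, a small retry cap, and a caller‑supplied fallback such as a cached summary. `sleep` is injected so the logic is testable. The function names are illustrative.

```typescript
// Error categories from the list above; map your SDK's errors into this shape first.
type ErrorKind = "network" | "timeout" | "rate_limit" | "validation" | "policy_block";

interface AiCallError {
  kind: ErrorKind;
  message: string;
}

// Only network errors and 429s are retried; timeouts have already spent the latency
// budget, and validation or policy blocks will not succeed on a second try.
function isRetryable(err: unknown): boolean {
  const kind =
    typeof err === "object" && err !== null ? (err as Partial<AiCallError>).kind : undefined;
  return kind === "network" || kind === "rate_limit";
}

// Exponential backoff with full jitter: a random delay in [0, min(cap, base * 2^attempt)).
function backoffMs(attempt: number, baseMs = 200, capMs = 2000, rand: () => number = Math.random): number {
  return Math.floor(rand() * Math.min(capMs, baseMs * Math.pow(2, attempt)));
}

async function withRetryAndFallback<T>(
  call: () => Promise<T>,
  fallback: () => T, // e.g., cached summary or a rules-based result
  sleep: (ms: number) => Promise<void>,
  maxRetries = 2 // small cap to protect clinic-hour latency budgets
): Promise<{ result: T; degraded: boolean }> {
  for (let attempt = 0; ; attempt++) {
    try {
      return { result: await call(), degraded: false };
    } catch (err) {
      if (!isRetryable(err) || attempt >= maxRetries) {
        // Never block the workflow: hand back the fallback and flag the degradation.
        return { result: fallback(), degraded: true };
      }
      await sleep(backoffMs(attempt));
    }
  }
}
```

In production, `sleep` would be `(ms) => new Promise((r) => setTimeout(r, ms))`. The `degraded` flag is what drives the explicit user message, so the UI can offer the raw note instead of silently dropping the summary.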

Comprehensive Testing and Monitoring for Medical AI API Integrations

Testing Accuracy and Performance in Medical AI API Integrations

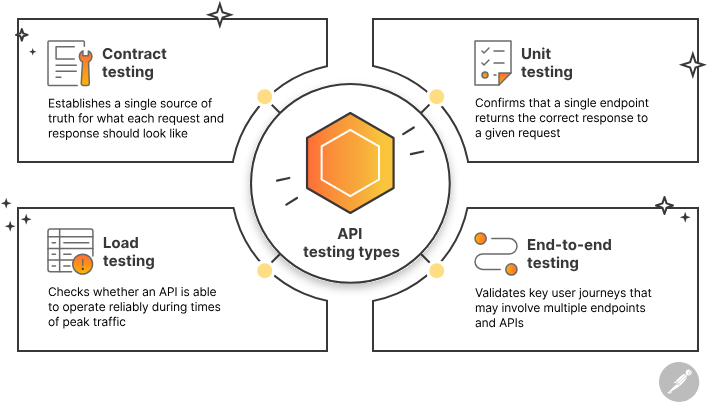

Before I let clinicians anywhere near a new AI feature, I run three layers of testing:

1. Unit and contract tests: Validate that requests are well‑formed and responses conform to JSON schemas.

2. Scenario regression sets: A fixed corpus of de‑identified or synthetic charts with expected outputs (or at least expected bounds). I re‑run these anytime I change prompts or models.

3. Hallucination checks: I score responses for unsupported assertions, either manually with SMEs or using secondary heuristics. If hallucination rates creep up, I freeze the rollout.

Load tests are also non‑negotiable: I simulate peak clinic volumes to understand latency and p95/p99 behavior. You can leverage API testing tools to automate these workflows.
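Scenario regression sets and a crude hallucination screen can share one harness. The following is a minimal sketch. It assumes hand‑written cases and outputs produced by whatever summarizer is under test. The "unsupported number" check is just one example of a secondary heuristic: it flags any number in the output that never appears in the source. It will raise false positives and is not a substitute for SME review. All names are illustrative.

```typescript
interface RegressionCase {
  id: string;
  source: string; // de-identified or synthetic chart text
  mustMention: string[]; // expected bounds: terms the summary should contain
  mustNotMention: string[]; // e.g., diagnoses absent from the source
}

interface CaseReport {
  id: string;
  failures: string[];
}

// Numbers in the output that never appear in the source are a cheap hallucination signal.
function unsupportedNumbers(source: string, output: string): string[] {
  const inSource = new Set(source.match(/\d+(\.\d+)?/g) ?? []);
  return (output.match(/\d+(\.\d+)?/g) ?? []).filter((n) => !inSource.has(n));
}

function checkCase(c: RegressionCase, output: string): CaseReport {
  const lower = output.toLowerCase();
  const failures: string[] = [];
  for (const term of c.mustMention) {
    if (!lower.includes(term.toLowerCase())) failures.push(`missing: ${term}`);
  }
  for (const term of c.mustNotMention) {
    if (lower.includes(term.toLowerCase())) failures.push(`forbidden: ${term}`);
  }
  for (const n of unsupportedNumbers(c.source, output)) failures.push(`unsupported number: ${n}`);
  return { id: c.id, failures };
}

// Share of failing cases; a rising rate is the signal to freeze a rollout.
function failureRate(reports: CaseReport[]): number {
  if (reports.length === 0) return 0;
  return reports.filter((r) => r.failures.length > 0).length / reports.length;
}
```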

Continuous Monitoring of API Changes and Updates for Medical Use Cases

Silent model improvements from vendors are good for consumer apps and dangerous for regulated care.

What I do now:

- Pin versions where possible (model IDs or deployments) and treat any change as a release event.

- Shadow testing: When a vendor ships a new model, I run it in parallel on a sample of traffic and compare outputs before switching.

- Operational dashboards: Latency, error rates, cost per 1k requests, and model mix, sliced by feature.

- Governance loop: A standing review with clinical and security stakeholders to evaluate new models, updated guidance, and incident reports.
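Shadow testing needs two pieces: a stable way to pick the sample and a way to surface divergences for review. The following is a minimal sketch. It assumes each request carries a stable ID and that both the pinned and candidate models return text. The FNV‑1a hash and the word‑overlap (Jaccard) score are illustrative choices, not a vendor feature. The 0.6 threshold is a placeholder to tune against your regression set.

```typescript
// 32-bit FNV-1a over UTF-16 code units: deterministic, so a given request
// is always either in or out of the shadow sample.
function fnv1a(s: string): number {
  let h = 0x811c9dc5;
  for (let i = 0; i < s.length; i++) {
    h ^= s.charCodeAt(i);
    h = Math.imul(h, 0x01000193) >>> 0;
  }
  return h >>> 0;
}

function inShadowSample(requestId: string, percent: number): boolean {
  return fnv1a(requestId) % 100 < percent;
}

// Word-level Jaccard similarity between pinned and candidate outputs.
function jaccard(a: string, b: string): number {
  const words = (s: string) => new Set(s.toLowerCase().split(/\W+/).filter(Boolean));
  const setA = words(a);
  const setB = words(b);
  let shared = 0;
  setA.forEach((w) => {
    if (setB.has(w)) shared++;
  });
  const union = setA.size + setB.size - shared;
  return union === 0 ? 1 : shared / union;
}

interface ShadowRecord {
  requestId: string;
  pinnedModel: string;
  candidateModel: string;
  similarity: number;
  needsReview: boolean; // low-similarity pairs go to clinical reviewers
}

function compareShadow(
  requestId: string,
  pinned: { model: string; output: string },
  candidate: { model: string; output: string },
  threshold = 0.6
): ShadowRecord {
  const similarity = jaccard(pinned.output, candidate.output);
  return {
    requestId,
    pinnedModel: pinned.model,
    candidateModel: candidate.model,
    similarity,
    needsReview: similarity < threshold,
  };
}
```

Only the pinned model's output reaches users; the candidate's output is logged and compared. The switch becomes a release event once the review queue and regression set both look clean.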

If you build these habits into your medical AI API integration from day one, you’ll spend far less time firefighting and far more time proving, with data, that your AI features are safe, reliable, and worth keeping.

Frequently Asked Questions About Medical AI API Integration

What is medical AI API integration and why is it different from regular API integration?

Medical AI API integration is the process of wiring LLMs or AI services into EHRs and health apps in a HIPAA‑aligned way. Unlike typical integrations, you must manage PHI scope, BAAs, de‑identification, logging, model behavior shifts, and clinical risk, not just connectivity and latency.

How do I choose the right platform for medical AI API integration (OpenAI, Azure, Google, AWS)?

Compare platforms on compliance posture, deployment model, pricing, and ecosystem. Many teams use Azure OpenAI or Google Vertex AI with the Cloud Healthcare API for HIPAA workloads, and OpenAI direct or other vendors for de‑identified R&D. Also evaluate guardrails, model benchmarks, and regional data residency options before sending any PHI.

How can I keep medical AI API integrations HIPAA compliant?

Use only HIPAA‑eligible cloud services with a signed BAA, minimize PHI fields sent to the model, and de‑identify whenever possible. Pin workloads to approved regions, disable vendor data retention or training on your data, and log who used which prompt and model version without storing raw PHI in logs.

What is the best way to secure API keys and tokens in medical AI integrations?

Never expose AI keys in mobile apps or front‑end code. Store keys only in backend services, using managed secret stores like Azure Key Vault, AWS Secrets Manager, or Google Secret Manager. Prefer short‑lived access tokens or workload identities, and let clients call your backend, which then calls the AI service.

Should I fine-tune my own medical model or rely on hosted AI APIs?

For most teams, starting with hosted medical AI API integration is faster and safer, leveraging vendor security, uptime, and model quality. Consider custom models only when you hit clear limits—such as specialty language, performance requirements, or strict on‑prem constraints—and ensure you can support training, validation, and monitoring at scale.
