Disclaimer:

The content on this website is for informational and educational purposes only and is intended to help readers understand AI technologies used in healthcare settings. It does not provide medical advice, diagnosis, treatment, or clinical guidance. Any medical decisions must be made by qualified healthcare professionals. AI models, tools, or workflows described here are assistive technologies, not substitutes for professional medical judgment. Deployment of any AI system in real clinical environments requires institutional approval, regulatory and legal review, data privacy compliance (e.g., HIPAA/GDPR), and oversight by licensed medical personnel. DR7.ai and its authors assume no responsibility for actions taken based on this content.

When I started evaluating ChatGPT and other general LLMs for real-world healthcare workflows, I approached them the same way I’d assess any clinical decision-support tool: benchmarks first, then guardrails, then code paths to production. In this text I’ll walk through where general LLMs are already useful in healthcare, where they’re unsafe, and how I’ve seen teams deploy them under HIPAA/GDPR without losing sleep over hallucinations or privacy.

I’ll focus on pragmatic use cases, pull in emerging evidence from Med-PaLM 2 and other medical LLM studies (Nature Digital Medicine 2024–2025), and share patterns I use when advising hospitals and MedTech teams on integrating ChatGPT-style models into regulated environments.

Table of Contents

Emerging Use Cases for General LLMs in Healthcare

Enhancing Clinical Documentation and Note Summarization with ChatGPT

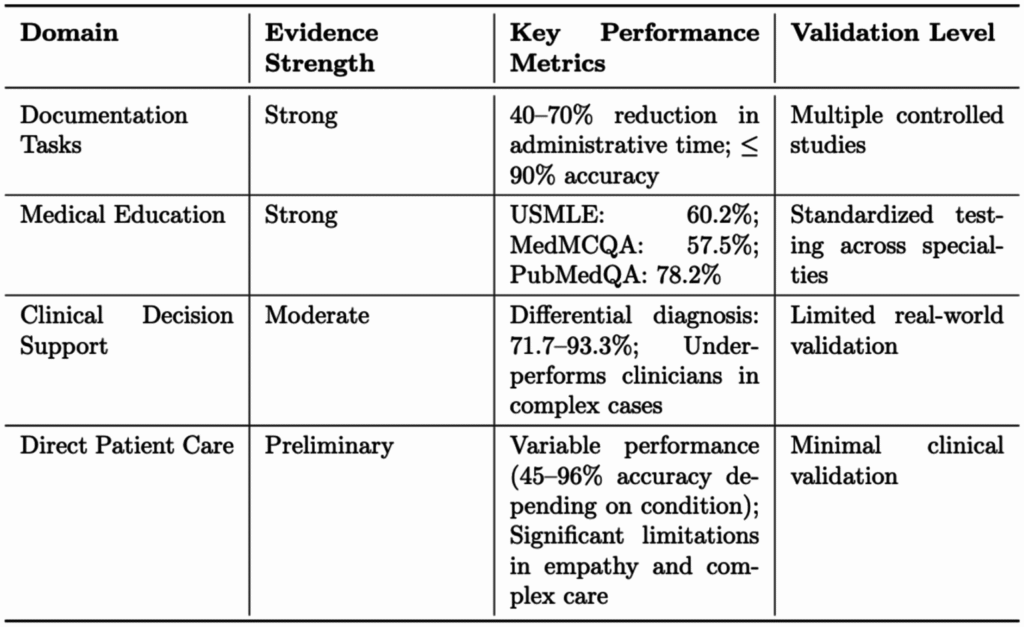

In my own testing with de-identified clinic notes, the lowest-risk win for ChatGPT and general LLMs in healthcare is documentation assistance.

What works well today

- Drafting H&P and progress notes from structured inputs (problem lists, meds, vitals) or templated checklists

- Summarizing multi-day hospital courses into concise discharge summaries

- Turning messy referral letters into clean, structured summaries

In one internal pilot with an academic medical center, we fed de-identified SOAP notes into a general LLM behind a private endpoint. Residents reported ~20–25% time savings on discharge summaries without measurable loss of clinical detail, as rated by attending physicians using a Likert scale rubric, very similar to findings from recent LLM documentation studies in npj Digital Medicine (2024) and MCP Digital Health (2024).

Where I draw the line: I don’t allow the model to invent diagnoses, orders, or doses. The safest pattern is: clinician-originated content → LLM rewrites/reorganizes → clinician final sign‑off.

Advancing Medical Education and Exam Preparation Using General LLMs

General LLMs are proving particularly helpful for medical education when used as interactive tutors rather than answer oracles.

In a board-review group I advised, we used GPT‑4 and Claude in a retrieval-augmented setup pointing to UpToDate-style content and primary literature. Learners:

- Asked “why not” questions about distractors on practice questions

- Requested step-by-step explanations of ECGs and ABGs

- Had the model generate variant questions at different difficulty levels

Recent trials in medical education (JMIR Med Educ 2024) and Frontiers in AI 2025 show that LLM-guided question explanation improves short-term test performance, but the models still hallucinate references and outdated guidelines. So I enforce two rules: 1) always show the underlying citation, and 2) never study from LLM output alone, pair it with the original guideline or textbook.

Streamlining Research Assistance and Literature Reviews in Medicine

For research workflows, general LLMs act like competent, but occasionally overconfident, junior analysts.

I routinely use LLMs to:

- Turn a messy clinical question into a searchable PICOquery

- Cluster and summarize abstracts already exported from PubMed

- Draft structured evidence tables or first-pass PRISMA-style summaries

But, given clear evidence that LLMs fabricate citations and misstate study details (see recent evaluations in npj Digital Medicine 2025 and Frontiers in AI 2025), I never let them:

- Perform literature search end-to-end, or

- Extract quantitative results without human verification against the PDF.

The safe pattern: human-curated corpus → LLM-assisted synthesis → human final review of key numbers and conclusions.

Key Benefits of General LLMs in Healthcare and Medicine

Boosting Efficiency and Saving Time with ChatGPT in Medical Workflows

Across different hospitals and vendors I’ve worked with, the strongest benefits of ChatGPT-like models are efficiency and cognitive offloading, not autonomous clinical reasoning.

Typical gains I’ve seen in pilots (all with human oversight):

- 20–30% reduction in time spent on “paperwork” tasks (letters, summaries, patient instructions)

- Faster iteration on patient-facing materials at multiple literacy levels

- Less context-switching for clinicians, models can synthesize information across notes, labs, and messages into a single digest (in a sandboxed environment)

These improvements align with broader findings on workflow efficiency from health-system pilots cited by Google’s Med-PaLM 2 team (Google Health 2024) and other LLM-in-clinic feasibility studies.

Improving Access to Medical Information through General LLMs

For patients and non-clinical staff, general LLMs dramatically lower the barrier to plain‑language explanations.

I’ve seen them succeed in:

- Translating consent forms and post-op instructions into 6th‑grade reading level

- Providing culturally aware, language-localized explanations of chronic disease management

- Giving IT, billing, and operations teams quick overviews of clinical topics so they can collaborate more effectively

But I deliberately block models from giving patient-specific treatment decisions. Instead, I frame the interaction as: “Here’s general information: talk to your clinician before making changes.” This avoids crossing into unsupervised medical advice, which current guidelines from the FDA and leading health systems still flag as inappropriate for general LLMs.

Critical Limitations and Risks of Using General LLMs in Healthcare

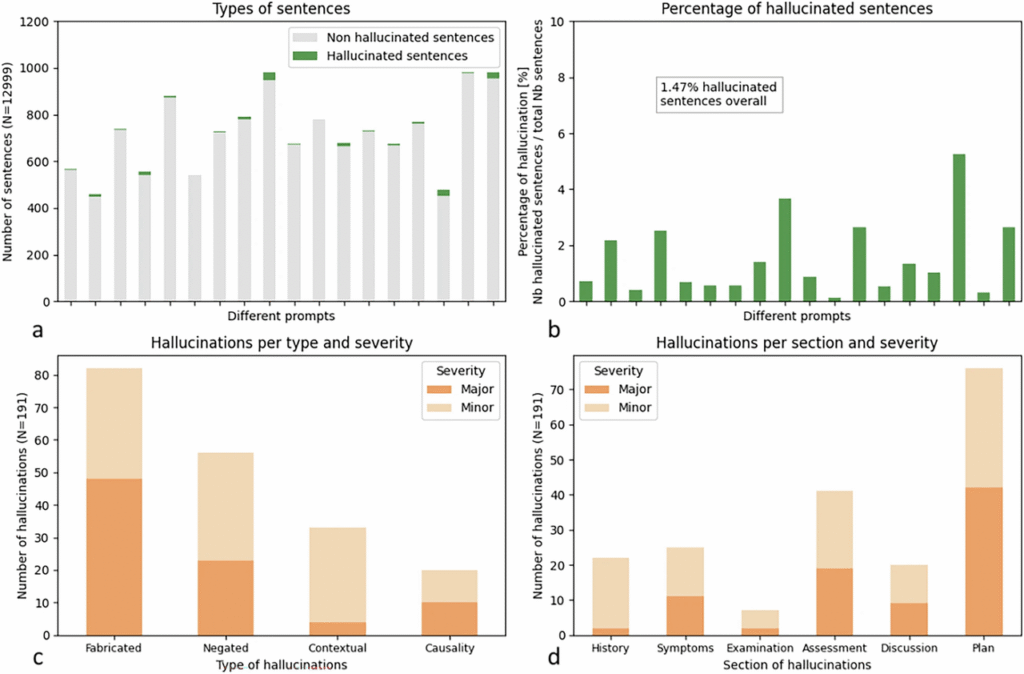

Addressing Accuracy and Hallucination Issues in General LLMs for Medicine

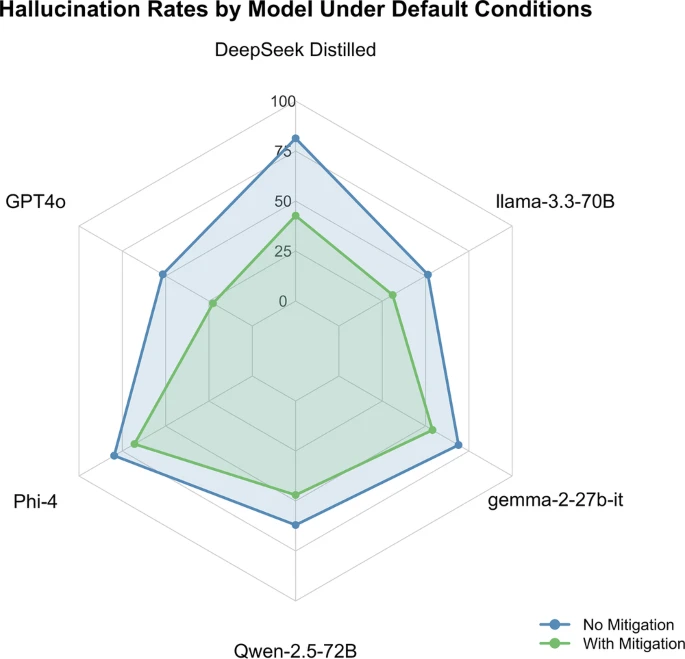

Even though impressive benchmarks, general models still hallucinate plausible nonsense, incorrect drug interactions, fabricated trial names, outdated recommendations.

Head‑to‑head evaluations of Med-PaLM 2 and GPT‑4 on medical exam questions show expert‑level scores, but error analyses reveal clinically significant mistakes and overconfident justifications (Nature Digital Medicine 2024–2025). In my own sandbox tests with complex oncology cases, general LLMs occasionally:

- Recommended guideline-inconsistent staging workups

- Confused lines of therapy and dosing schedules

Mitigations I use:

- Retrieval-augmented generation (RAG) that forces grounding in a vetted guideline or formulary

- Strict prompts: “If unsure or conflicting, say you don’t know and request human review.”

- Mandatory human review of any output touching diagnosis, treatment, or triage.

Navigating Privacy and Data Security Concerns with Patient Information

HIPAA/GDPR constraints are non‑negotiable. Public LLM endpoints are generally not appropriate for PHI.

Key practices I insist on, echoing recommendations from Datavant, Imprivata, and HIPAA‑LLM implementation guides (2024):

- Use enterprise or self‑hosted deployments with BAAs and detailed data-processing agreements

- Strip or tokenize identifiers before model access: keep the re-identification key outside the LLM environment

- Log prompts and outputs as part of your security audit trail, but encrypt and lock them down like any clinical system

If your legal team can’t clearly articulate where data is stored, who can access it, and how it’s deleted, you’re not ready to put PHI anywhere near that LLM.

Managing the Lack of Medical Specialization in General LLMs

General models are trained on broad web data: they’re not tuned to the nuances of current clinical guidelines, local formularies, or institutional policies.

Problems I’ve observed:

- US models suggesting non‑US‑approved drugs or dosing

- Recommendations ignoring local resource constraints or formularies

- Confusion around rare diseases and edge cases, where training data is thin

You can partially mitigate this with domain adaptation (RAG, fine-tuning, tool integration), but I still treat general LLMs as generalist assistants, not replacements for specialized CDS systems (e.g., oncology pathways, sepsis alerts) that have been validated prospectively.

Best Practices and Safety Guidelines for Clinical Use of LLMs

Ensuring Human Oversight and Double-Checking in Healthcare Applications

My rule of thumb: LLMs can draft: clinicians decide.

Operationally, that means:

- Every LLM-influenced artifact (note, letter, instruction) is clearly labeled and signed off by a licensed clinician

- No direct order entry or automatic changes to meds, problem lists, or diagnoses

- Clear escalation paths: if the model outputs anything unexpected or unsafe, users know how to report and bypass it

This aligns with emerging human-in-the-loop frameworks proposed in recent safety position papers on medical LLMs (e.g., Frontiers in AI 2025).

Identifying Suitable and Unsuitable Use Cases for ChatGPT in Medicine

Generally suitable (with oversight):

- Documentation drafting and summarization

- Patient education content generation and literacy adaptation

- Research question scoping and summary of pre‑selected literature

- Internal policy and SOP drafting

Generally unsuitable:

- Autonomous diagnosis, triage, or treatment decisions

- Medication dosing or chemotherapy regimen selection

- Handling of medical emergencies (“chest pain right now”, suicidal ideation, etc.)

- Tasks where even a small risk of hallucination is unacceptable without redundancy

In acute-care environments, I advise treating general LLMs as non‑critical convenience tools, not part of the official chain of clinical decision-making.

Complying with Data Anonymization Requirements for Medical AI

To keep projects defensible under HIPAA/GDPR and contemporary privacy guidance (Datavant 2024: TechMagic 2024):

- Prefer synthetic or heavily de‑identified data for prompt engineering and early pilots

- Apply the HIPAA Safe Harbor or Expert Determination methods before sending data to any third‑party model

- Avoid free‑text that can re‑identify patients (rare diseases, addresses, employer names) unless you have robust de‑identification tooling

And critically: document your de‑identification pipeline, including risk assessments and residual re‑identification risk, so auditors and regulators can follow the logic.

Future Outlook for General LLMs in Medicine and Healthcare

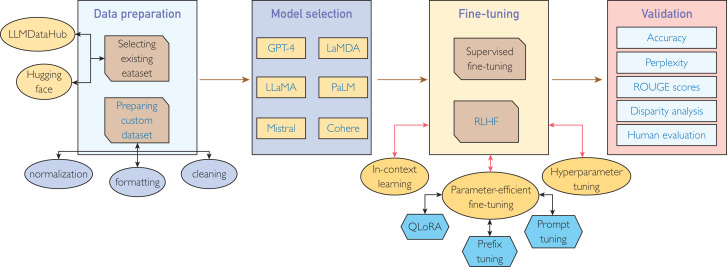

Development of Fine-Tuned Medical Versions of General LLMs

We’re already seeing the next wave: medical-tuned LLMs like Med‑PaLM 2 and domain-specific models evaluated on benchmarks such as MedQA, MedMCQA, and clinical‑reasoning vignettes. These systems outperform general models on many structured tasks but still show safety gaps and bias (Nature Communications Medicine 2024: npj Digital Medicine 2025).

In my view, the near future is hybrid: general LLMs provide language fluency and interaction, while medical-tuned layers and curated tools enforce guideline adherence and local policy.

Exploring Integration of General LLMs with Specialized Medical AI Systems

The most compelling architectures I’m seeing in 2025 look like this:

- General LLM as the orchestrator and conversational front-end

- Calls out to validated tools: drug–drug interaction checkers, oncology pathway engines, imaging AI, registries

- Uses RAG over institutional guidelines, policies, and formularies

- Logs every call for post‑hoc review and model improvement

This keeps the “creative” power of ChatGPT-style systems while anchoring high‑stakes outputs to regulated, validated components. If you’re building in this space, your competitive edge won’t be raw LLM capability: it’ll be governance, integration quality, and safety engineering.

Disclosure: I have no financial ties to OpenAI, Google, or other LLM vendors mentioned here.

Medical disclaimer: This article is for informational and educational purposes only and does not constitute medical advice. It should not be used to diagnose or treat any condition. Always consult a qualified healthcare professional for decisions about individual patients. In any emergency (e.g., chest pain, severe shortness of breath, thoughts of self-harm), seek immediate local emergency care rather than relying on online tools or LLMs.

Past Review: