⚠️ WARNING: This post reflects only the author’s individual, unvalidated practices in research/prototype environments. None of the methods have prospective clinical validation, IRB approval, or regulatory clearance (FDA/CE/NMPA etc.). Do NOT use any technique described here in real patient care or regulatory submissions without independent validation and approval.

Explainable AI isn’t a feel‑good add‑on in healthcare, it’s operational risk control. When I evaluate models for HIPAA/GDPR‑bound deployments, an explanation must do two jobs: help a clinician judge whether to trust a prediction right now, and give my team an audit trail we can defend to regulators later. In this piece, I’ll share what’s worked in my testing (from SHAP to heatmaps to LLM attribution), where explanations fail, and how I balance accuracy with interpretability without torpedoing model performance.

Table of Contents

Why Explainable AI Matters in Healthcare

How Explainability Impacts Clinical Decision-Making and Trust

In my pilots with ICU risk models, clinicians rarely ask for the full algorithm: they want to know, “Why this patient, why now?” Explanations that localize to chart features (e.g., rising lactate, MAP trending down, recent vasopressor start) help them reconcile model output with clinical context. Prospective evaluations show that explanations can calibrate trust, too vague and users ignore alerts: too confident and they over‑rely (see discussions on clinical decision support safety in Critical Care, 2024 and perspectives in Nature Digital Medicine, 2025).

Two practical rules I’ve learned:

- Specific beats generic: feature‑level attributions tied to timestamps and source systems win over broad labels like “vitals abnormal.”

- Stability matters: if a slight data tweak flips the explanation, the model’s credibility falls fast (echoed in recent reproducibility work in medical AI, e.g., Nature Communications 2025).

Regulatory and Ethical Drivers for XAI in Medical Practice

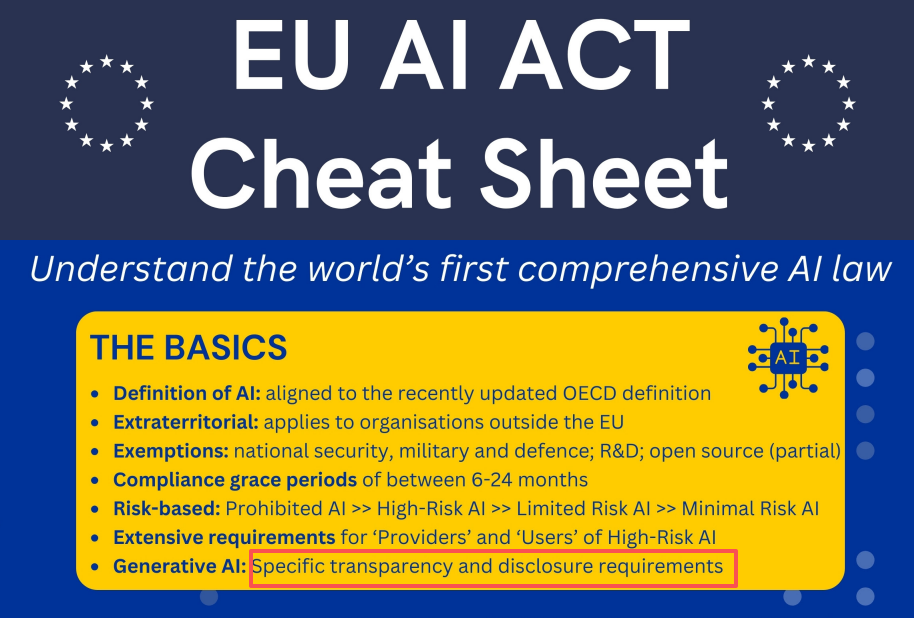

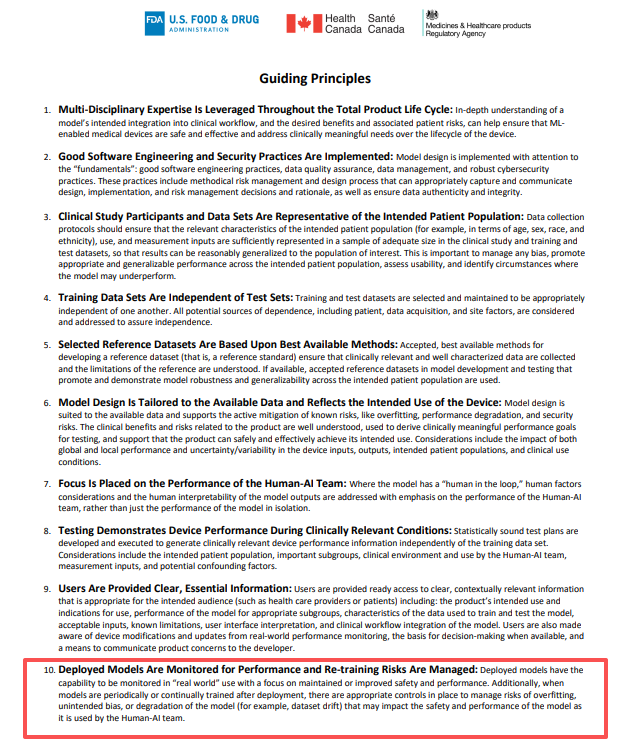

Explainability maps to concrete obligations. The EU AI Act (finalized 2024–2025) emphasizes transparency and human oversight for high‑risk systems. Under HIPAA/GDPR, traceability and data‑minimization are table stakes: explanation artifacts help justify processing and shared decision‑making. FDA’s GMLP, IEC 62304, and ISO 14971 expect risk controls, including human factors and post‑market surveillance, explanations become part of your risk file and usability evidence. Recent reviews (e.g., BMC Medical Informatics and Decision Making 2025: Wiley WIREs 2024) detail how XAI supports safety cases without mandating a specific technique, your documentation quality often matters more than the algorithmic flavor.

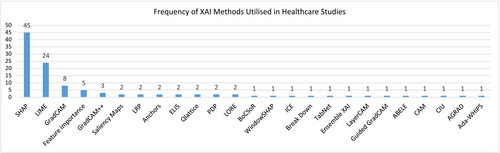

Approaches and Techniques in Explainable AI (XAI) for Medicine

Interpretable Models vs Post-Hoc Explanations

I start with interpretable baselines, logistic regression with splines, GAMs, and sparse decision rules, because they’re debuggable and fast to validate. In tabular EHR tasks, a tuned GAM with monotonic constraints often matches early gradient boosting runs while yielding transparent, clinician‑legible effects. When deep models win (imaging, multimodal fusion), I add post‑hoc tools but keep an interpretable challenger to guard against silent failure.

Considerations I apply before choosing:

- Data regime: sparse or biased labels favor interpretable models: rich, high‑dimensional signals often justify complex models.

- Accountability: if a decision can’t be reversed (e.g., device dosage), I bias toward native interpretability and tight uncertainty bounds.

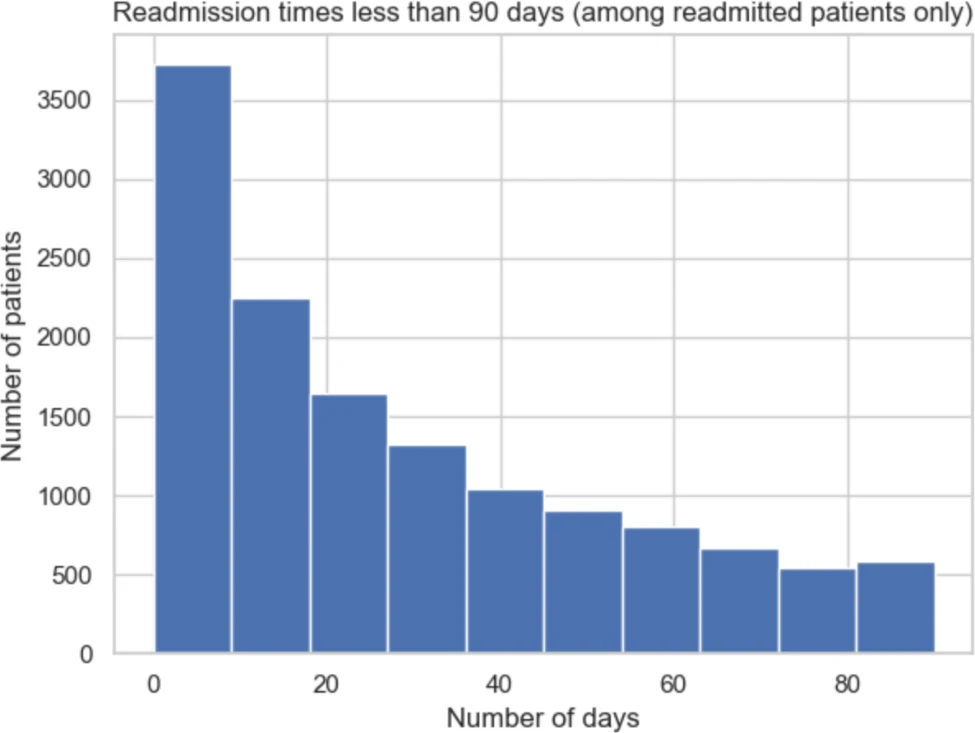

Practical Examples: LIME and SHAP for Medical Data Analysis

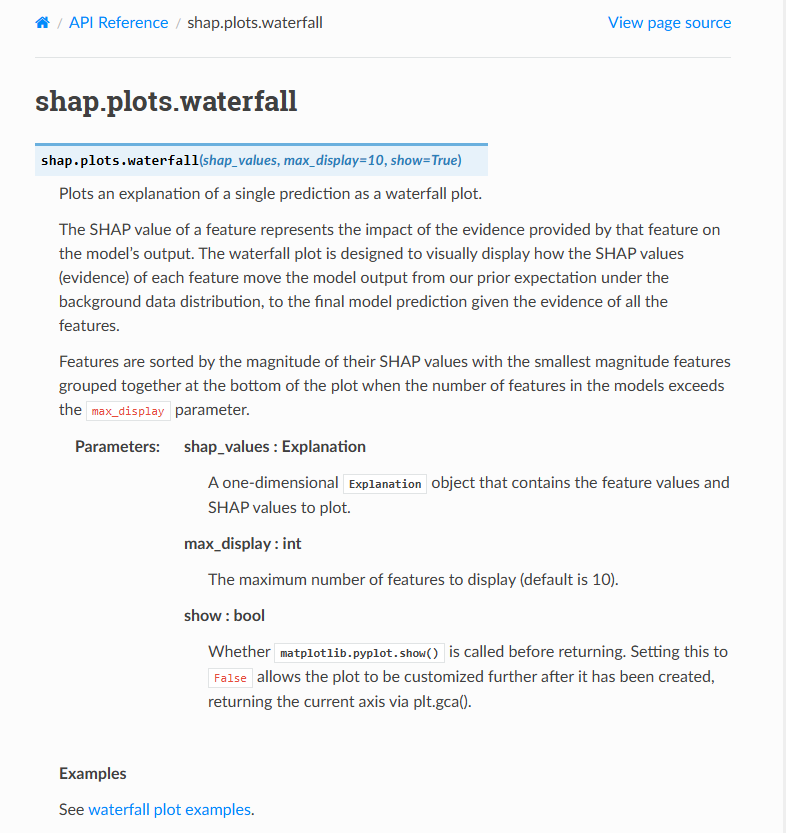

In my prototypes I currently default to SHAP because it’s additive and locally faithful for tree ensembles. LIME is quick for sanity checks but can be unstable with correlated features.

How I carry out SHAP safely:

- Background set: I use a clinically representative, de‑identified cohort (stratified by unit and shift) to compute conditional expectations, choice of background can swing attributions wildly (document it.).

- Leakage guard: I drop post‑admission features when explaining triage models: attributions must reflect data available at decision time.

- Aggregation: I report both local (per‑patient) and cohort‑level SHAP summaries to detect global drift.

For teams wanting a walkthrough, the step‑by‑step SHAP tutorial for healthcare with visuals is a solid primer, and recent peer‑reviewed evaluations (e.g., BMC 2020: Scientific Reports 2025) discuss stability and pitfalls in clinical settings.

Explainable AI in Medical Imaging

Visual Explanation Methods: Heatmaps and Attention Maps

For CNNs and ViTs, I use Grad‑CAM/Grad‑CAM++ to highlight salient regions and attention rollout for transformer interpretability. I always run sanity checks: randomizing weights should destroy the heatmap: if it doesn’t, you’ve got a placebo explanation. Importantly, saliency isn’t localization, clinicians can mistake a bright spot for a lesion when it’s really a confounder (positioning markers, devices). Recent analyses in Frontiers in Medical Technology (2025) and Scientific Reports (2025) underscore these failure modes.

Case Studies Demonstrating XAI in Diagnostic Imaging

In a chest X‑ray pneumonia experiment, heatmaps that consistently covered lower lobes correlated with true positives, but false positives lit up EKG leads and diaphragms, great feedback for data curation (removing spuriously predictive lines/tubes). In pathology, attention maps on whole‑slide images helped surface regions for second reads, speeding pathologist workflow without claiming pixel‑perfect ground truth. Across studies, reader‑in‑the‑loop designs with XAI tend to improve efficiency and calibration, but not always AUC, so I measure time‑to‑decision, inter‑rater agreement, and override rates alongside accuracy (see recent clinical workflow evaluations in Nature/PMC open‑access reviews).

Explainable AI for Medical Language Models

Challenges in Interpreting LLM Outputs in Healthcare

LLMs add two headaches: hallucinations and provenance. A fluent answer without citations is liability waiting to happen. Even retrieval‑augmented generation (RAG) can confabulate if chunking, prompts, or date cutoffs are off. There’s also privacy, models might echo PHI if prompts include identifiers. Studies in JMIR AI (2024) and recent NIH‑funded evaluations (PMC 2024–2025) quantify non‑trivial hallucination rates on medical QA and guideline synthesis tasks.

Solutions to Improve Transparency: Source Attribution and Reasoning Chains

My playbook:

- Source attribution by default: every claim must link to a specific, time‑stamped source (guideline PDF, PubMed abstract). I log document IDs, page ranges, and retrieval scores to an audit table.

- Constrained generation: structured outputs (JSON with fields: question, answer, citations[], uncertainty, guardrails_triggered) beat free text for reviewability.

- Evidence‑first prompting: force the model to extract and quote evidence spans before answering: unanswered if confidence < threshold. This reduces unsupported statements.

- Logprobs and self‑consistency: I expose token‑level logprobs and use n‑best decoding agreement as a faithfulness signal.

- Red‑team tests: adversarial prompts (out‑of‑scope drugs, outdated guidelines) catch brittle behaviors.

Emerging work on faithful reasoning traces and citation grounding and Harvard Medical School insights on AI safety suggests we can boost clinician trust without revealing sensitive chain‑of‑thought, store the evidence and decision steps, not verbose inner monologues.

Balancing Model Accuracy with Explainability in Healthcare AI

Trade-Offs Between Complexity and Interpretability

I treat explainability as a performance dimension, not a bolt‑on. A practical recipe:

- Tiered modeling: start with an interpretable model: advance to a complex model only if it clears a pre‑registered margin (e.g., +0.03 AUROC, better calibration, lower false alarms per 100 patients).

- Uncertainty and calibration: I require well‑calibrated probabilities (ECE/Brier), prediction intervals, and abstention policies. Explanations without uncertainty mislead.

- Risk‑based UI: high‑risk predictions show richer explanations (feature attributions, similar patients, source docs): low‑risk ones show minimal cues to avoid cognitive overload.

Future Developments and Trends in XAI for Medical Applications

I’m watching four fronts:

- Causally aware XAI: counterfactuals that respect clinical plausibility (no “make the patient 20 years younger”) to support what‑if planning.

- Concept bottlenecks and prototypes: models that reason via clinician‑named concepts and exemplar cases, improving reviewability.

- Federated explainability: privacy‑preserving attributions aggregated across sites, aligning with GDPR and multi‑institution studies (see BMC 2025 trends).

- Standardized reporting: explanation model cards with stability tests, data drift sensitivity, and known failure modes, mirroring the growing literature (Nature/BMC/Frontiers 2024–2025) and anticipated regulator expectations.

Bottom line: explainable AI that stands up in clinics is specific, stable, and auditable. If your explanations can’t survive a unit rotation, a model update, and a regulator’s “show me,” they’re not ready for care.

Frequently Asked Questions

Why does explainable AI matter in healthcare beyond transparency?

Explainable AI is operational risk control. It helps clinicians judge whether to trust a prediction now and provides an auditable trail for regulators later. Specific, stable explanations tied to chart features calibrate trust—too vague gets ignored, too confident drives over‑reliance—supporting safer clinical decision support and compliance.

How should I use SHAP (and LIME) safely for EHR risk models?

Use a clinically representative background set and document its selection. Guard against leakage by restricting explanations to data available at decision time. Report both local and cohort‑level summaries to spot drift. LIME is fine for quick checks, but can be unstable with correlated features—treat it cautiously.

How can I validate heatmaps and attention maps in medical imaging XAI?

Run sanity checks: randomizing model weights should destroy the heatmap. Watch for confounders—bright regions can reflect devices or markers, not pathology. Pair saliency with reader‑in‑the‑loop evaluation, tracking time‑to‑decision, inter‑rater agreement, and override rates, since explainable AI can improve efficiency without necessarily boosting AUC.

What’s the best way to balance model accuracy with interpretability in clinical AI?

Adopt tiered modeling: start with interpretable baselines and advance to complex models only if they clear a pre‑registered margin (e.g., +0.03 AUROC) with better calibration. Require uncertainty estimates and abstention policies. Use risk‑based UI: show richer explanations for high‑risk outputs and minimal cues for low‑risk cases.

Does the EU AI Act or FDA require explainable AI, and what proof is needed?

For high‑risk systems, the EU AI Act emphasizes transparency and human oversight, while FDA GMLP, IEC 62304, and ISO 14971 expect risk controls. They don’t mandate a specific XAI method. Strong documentation—traceable explanations, data minimization, usability evidence, and post‑market monitoring—often matters more than the algorithmic flavor.

Disclaimer:

This article is intended solely for medical AI research and technical exchange purposes and does not constitute medical advice, diagnosis, treatment, or clinical decision-making guidance of any kind.

All technical methods, parameter settings, examples, and cases described in this article represent the author’s personal experience and opinions only. None of them have undergone prospective real-world clinical validation, local Institutional Review Board / Ethics Committee (IRB/IEC) approval, or clearance by the National Medical Products Administration (NMPA), U.S. Food and Drug Administration (FDA), CE marking, or any equivalent regulatory authority.

These contents must not be directly applied to any real-world clinical environment or patient care scenario.

Any institution or individual who references this article for the development, testing, or deployment of AI systems shall bear full and sole responsibility for all regulatory compliance, data privacy, safety, ethical, and legal obligations. DR7.ai and the author assume no liability whatsoever for any actions taken based on this content.

- The techniques described must not be referenced in any regulatory submission (FDA, EMA, NMPA, etc.) or used as evidence of compliance.

- Author and publisher assume no liability for any clinical, legal, or regulatory consequences arising from application of content.

Past Review: