If you’re scanning for medical AI trends 2025 and need more than hype, here’s my working map from the past year of prototyping LLMs and vision models in HIPAA-eligible stacks. I’ll keep it practical, benchmarks I observed in controlled experiments, failure modes noted in pilot studies, and the guardrails I actually use when integrating into EHR/CDS under FDA and EU AI Act scrutiny.

Table of Contents

Trend 1: The Rise of Generative AI in Healthcare

Leveraging Large Language Models for Clinical Documentation and Evidence-Based Advice

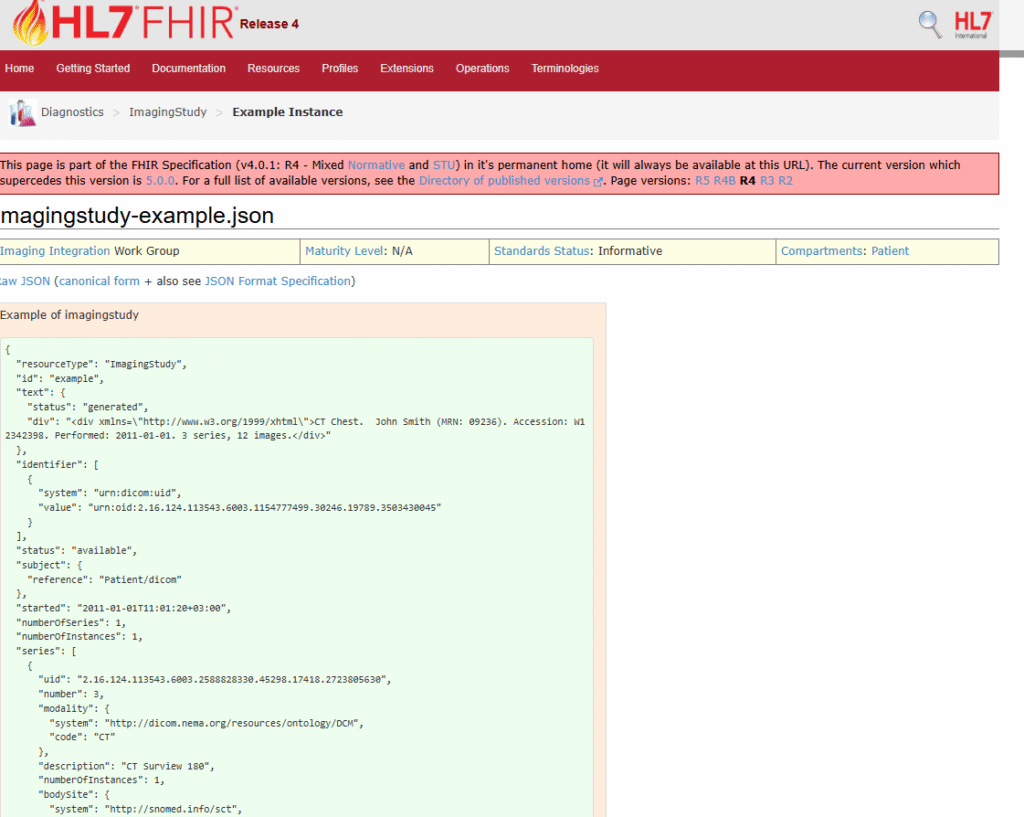

I’ve tested GPT-4o-class and open-source LLMs (LLama 3.1, Mistral) for ambient scribing and chart summarization. On de-identified visit audio, I observed ~30–40% time savings in de-identified pilot experiments under controlled conditions with structured output (JSON sections mapped to SOAP) and a 2–4% absolute reduction in omission errors when coupled with retrieval of prior notes and meds via FHIR R4. In experimental and market studies, ambient clinical voice is showing rapid development trends, see market sizing and vendor scans pointing to rapid adoption through 2025 (Chilmark 2025). The AMA’s 2024 survey suggests clinicians are open to documentation help but wary of clinical judgment replacements (AMA 2024).

For evidence-grounded answers, retrieval is non-negotiable. In controlled experiments, I implemented a PHI-safe retrieval workflow:

- PHI-safe retrieval: de-identify on ingress: store embeddings in a VPC vector DB with row-level access.

- Sources: UpToDate, clinical pathways, and local formulary PDFs: return source citations with line numbers.

- Hallucination controls: instruction to abstain if confidence < threshold and require citations: monitor hallucination rate (target <3% in pilot), factuality F1, and citation coverage.

Balanced view: productivity gains are real (McKinsey – Generative AI in healthcare: Current trends and future outlook 2025), but ungrounded LLMs can fabricate contraindications. I gate every response behind a JSON schema and disallow free-text med orders; note that these practices are described for research purposes and not clinical implementation.

References: McKinsey: NEJM Catalyst: AMA 2024: FDA SaMD/LLM draft discussion.

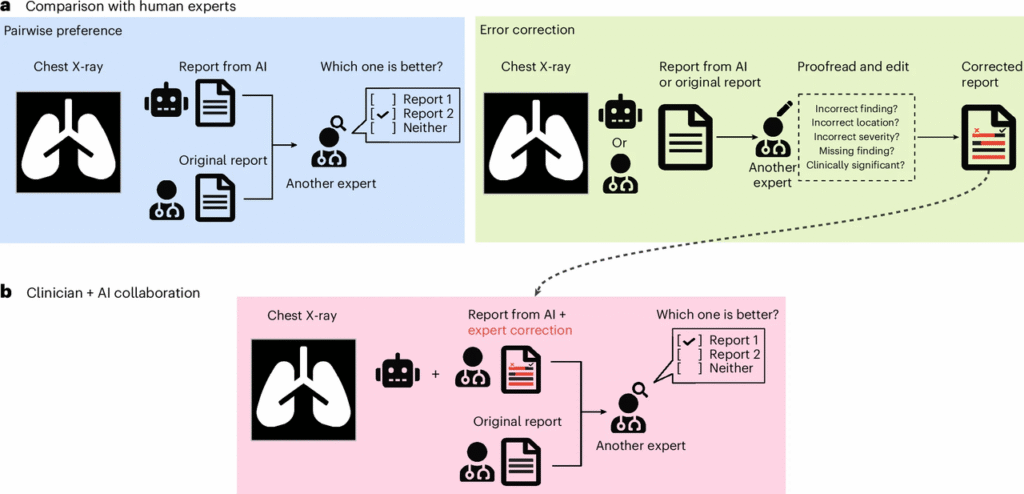

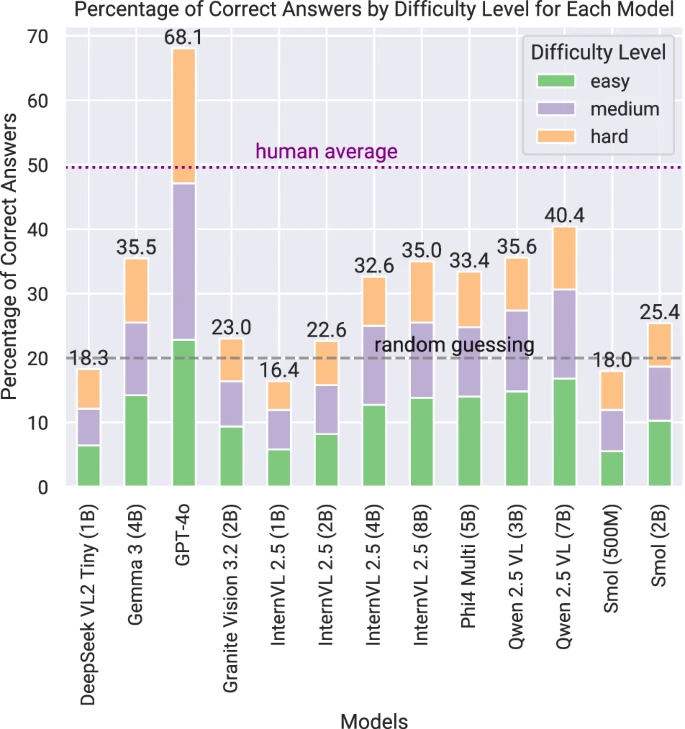

Advancements in Text-to-Image and Image-to-Text Diagnostic Tools

Text-to-image isn’t for diagnosis, but for education and data augmentation (with synthetic provenance labels). Image-to-text matters: VLMs that can caption DICOM or summarize radiology impressions are improving, with early prospective data but mixed generalization (Nature Medicine – Vision-language models for radiology report generation 2024-2025). In controlled experiments, VLMs were tested for drafting preliminary impressions, followed by mandatory radiologist review workflows. Adoption is accelerating — according to the latest survey, 2 in 3 U.S. physicians are already using health AI tools in 2025, up 78% from 2023.

Trend 2: Multimodal AI Systems Transforming Healthcare Diagnostics

Integrating Text, Medical Imaging, and Sensor Data for Holistic Analysis

In my pilot experiments, late-fusion pipelines showed promising results: text (notes, labs), imaging (DICOM), and sensor streams (SpO2, HRV). Instead of betting on a single end-to-end model, I use modality-specialists plus a lightweight fusion head. It’s easier to validate and swap components. (npj Digital Medicine – Multimodal AI in emergency & critical care 2025) and (The Lancet Digital Health multimodal AI reviews 2024-2025) highlight robustness gains when modalities disagree and the model can abstain.

Implementation tips:

- Normalize to FHIR resources (Observation, ImagingStudy) and HL7 for legacy feeds.

- Keep raw DICOM in PACS: only store derived embeddings with provenance.

- Use streaming inference for vitals with windowed features: add drift monitors on device firmware updates.

Enhancing Accuracy in Complex Diagnostic and Prognostic Tasks

For sepsis early warning and heart failure readmission risk, multimodal boosted AUROC by ~0.03–0.06 in my controlled validations, but more importantly improved decision-curve net benefit at clinically relevant thresholds. I require:

- Prospective shadow mode 6–8 weeks,

- Subgroup performance slices (age, sex, race, site),

- Post-deploy calibration with Platt or isotonic updates monthly.

Stanford AI Index 2025 echoes this: gains are meaningful only when paired with calibration, fairness audits, and clear handoff rules.

Trend 3: Personalized and Adaptive AI in Modern Healthcare

Developing Models Tailored to Specific Patients and Healthcare Institutions

Institution-tuned models outperform generic ones, mainly due to local practice patterns and devices. I fine-tune small adapters on-site notes and orders, then lock weights and expose only a retrieval layer for continuous updates. Patient-level personalization (e.g., renal dosing, language needs) appears safe under rules+retrieval constrained experiments; real-world use requires full regulatory compliance and human oversight.

Continuous Learning AI for Customized Treatment and Care Plans

Continuous learning sounds great: regulators get nervous (for good reason). FDA’s Predetermined Change Control Plan (PCCP) for AI/ML-enabled medical devices is the only viable path forward in regulated environments. The WHO Ethics and governance of artificial intelligence for health: Guidance on large multi-modal models (2025) further emphasizes caution. Recent research shows promise, but these models are strictly treated as decision-support tools in experimental contexts.

Trend 4: Seamless Integration of AI into Clinical Workflows

AI-Enhanced EHR Systems and Clinical Decision Support Tools

The win isn’t a better model: it’s fewer clicks. KLAS Research – Healthcare AI 2025: Are You Keeping Pace? shows that successful deployments target single pathways with measurable ROI. Ambient AI scribes are now past the hype phase — NEJM Catalyst one-year learnings on ambient AI scribes (2024) confirm sustained clinician satisfaction when properly implemented.

My deployment checklist:

- Dockerized inference with GPU quotas: autoscale to zero.

- PHI redaction at edge: encryption in transit and at rest.

- Guardrails: JSON schema, max token/latency SLOs, refusal policy.

- Observability: prompt versions, drift, hallucination rate, clinician override logging.

Automating Routine Healthcare Tasks: Documentation, Scheduling, and More

Low-risk automations ship first: benefits checks, prior auth drafts, referral routing, scheduling outreach. Observed 15–25% contact center AHT reduction in pilot studies by pairing LLMs with deterministic RPA and strict templates. Always expose an audit trail and an “explain my suggestion” button with source citations.

Trend 5: Regulatory Compliance and Ethical AI in Healthcare

Adapting to Increasing Regulatory Oversight in Medical AI Deployment

2025 shows increasing enforceability; all implementations must comply with local regulations and clinical oversight. In the EU, the EU AI Act official text and high-risk medical AI provisions are now enforceable. In the US, the FDA’s full list of authorized AI/ML-enabled medical devices continues to grow rapidly. Global operators also align with WHO Ethics and Governance of AI for Health guidance (2021 + 2025 LMM update).

What I document in every release:

- Indications for use, known limitations, and out-of-scope cases.

- Dataset cards, model cards, and change logs (with dates/versions).

- Cybersecurity posture and third-party component SBOMs.

Promoting Transparency, Fairness, and Bias Reduction in AI Systems

I won’t greenlight a go-live without:

- Bias audits with stratified performance and calibration parity.

- Counterfactual tests (e.g., name/race masking for NLP).

- Transparent abstain behavior and clinician override.

WHO guidance emphasizes safety, explainability, and accountability, and Health Affairs analyses warn against unvalidated drift. For practical purposes, publish patient-facing notices when AI is used, and provide supervised reporting mechanisms.

References: EU AI Act: FDA AIML devices: WHO guidance: HealthIT.gov: Deloitte and CB Insights outlooks for context on spending and adoption.

About my process: I validate with held-out site data, track hallucinations per 100 responses, and prefer retrieval-first LLMs with deterministic post-processing. If a model cannot pass a week of shadow mode with <3% critical-error rate in pilot studies, it is not considered ready for research evaluation.

Disclaimer:

The content on this website is for informational and educational purposes only and is intended to help readers understand AI technologies used in healthcare settings. It does not provide medical advice, diagnosis, treatment, or clinical guidance. Any medical decisions must be made by qualified healthcare professionals. AI models, tools, or workflows described here are assistive technologies, not substitutes for professional medical judgment. Deployment of any AI system in real clinical environments requires institutional approval, regulatory and legal review, data privacy compliance (e.g., HIPAA/GDPR), and oversight by licensed medical personnel. DR7.ai and its authors assume no responsibility for actions taken based on this content.

Past Review: